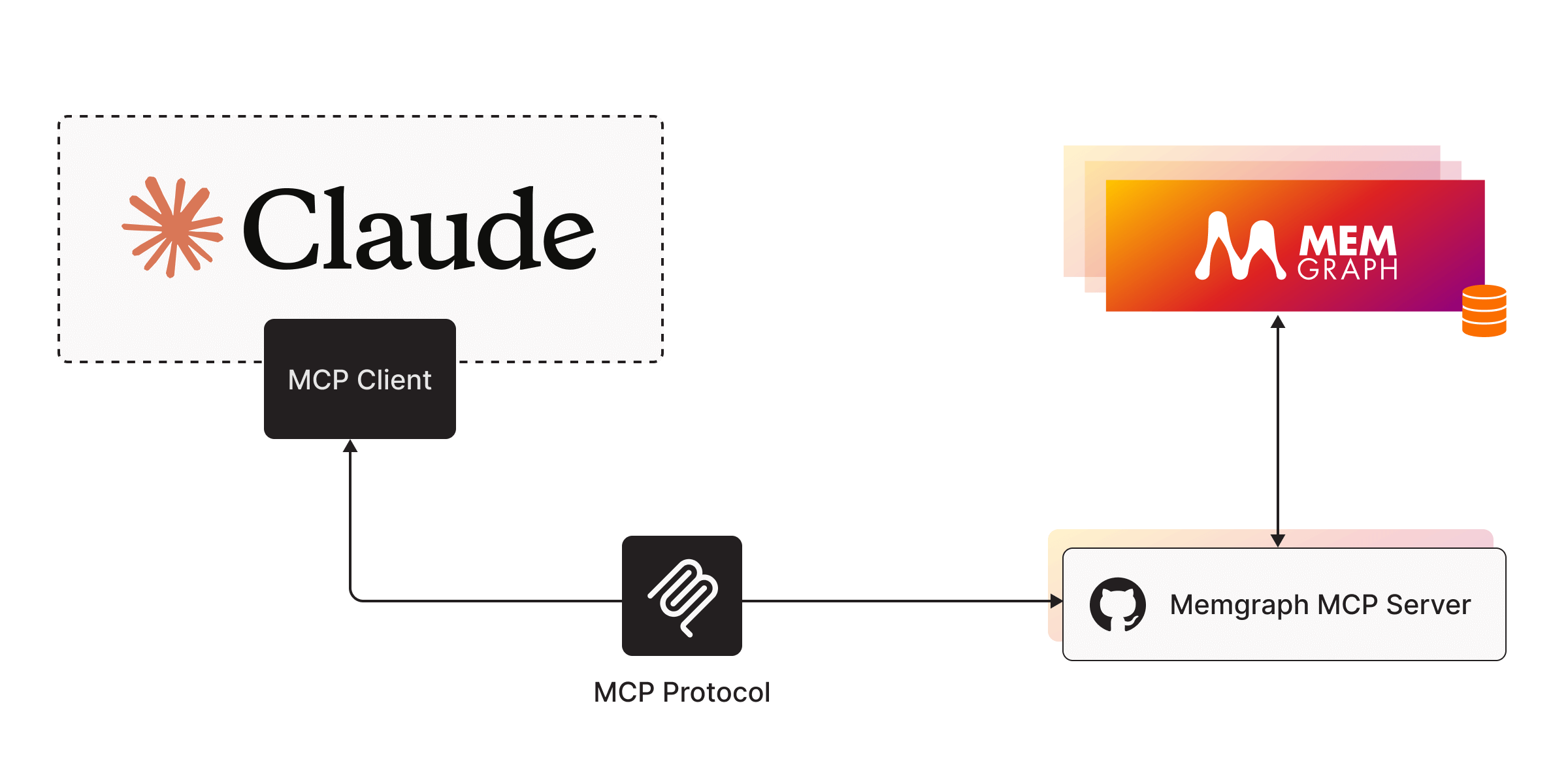

The Memgraph MCP Server acts as a bridge between Memgraph and LLMs, enabling AI-driven database interactions through a lightweight implementation. With this server, you can:

- Run queries: Execute Cypher queries against Memgraph using the `run_query()` tool
- Access schema information: Retrieve database structure details with the `get_schema()` resource (requires `--schema-info-enabled=True`)
- Interact conversationally: Connect LLMs like Claude to interact with your database directly from a chat interface

Click on "Install Server".

Wait a few minutes for the server to deploy. Once ready, it will show a "Started" state.

In the chat, type `@` followed by the MCP server name and your instructions, e.g., "@Memgraph MCP Server show me all users who purchased product X in the last month"

That's it! The server will respond to your query, and you can continue using it as needed.


This repository has been merged into the

🚀 Memgraph MCP Server

Memgraph MCP Server is a lightweight server implementation of the Model Context Protocol (MCP) designed to connect Memgraph with LLMs.

⚡ Quick start

1. Run Memgraph MCP Server

Install `uv` and create a virtual environment with `uv venv`. Activate it with `.venv\Scripts\activate` on Windows or `source .venv/bin/activate` on macOS/Linux.

Install dependencies: `uv add "mcp[cli]" httpx`

Run the Memgraph MCP server: `uv run server.py`

2. Run MCP Client

Install Claude for Desktop.

Add the Memgraph server to Claude config:

MacOS/Linux

Windows

Example config:

You may need to put the full path to the `uv` executable in the `command` field. You can get this by running `which uv` on macOS/Linux or `where uv` on Windows. Make sure you pass in the absolute path to your server.
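As a hedged sketch of the kind of entry described above, the following Python snippet builds an `mcpServers` entry for Claude Desktop's `claude_desktop_config.json` and prints it as JSON. The server name `memgraph`, the placeholder directory, and the `--directory ... run server.py` argument layout are assumptions for illustration, not values taken from this README; adjust them to match your setup.

```python
import json
import shutil


def build_claude_config(server_dir, uv_path=None, name="memgraph"):
    """Return a Claude Desktop config dict that launches server.py through uv."""
    # Prefer an explicit absolute path; otherwise look uv up on PATH
    # (the same lookup that `which uv` / `where uv` performs).
    command = uv_path or shutil.which("uv") or "uv"
    return {
        "mcpServers": {
            name: {  # hypothetical server name, pick any label you like
                "command": command,
                # Assumed layout: run server.py from the project directory.
                "args": ["--directory", server_dir, "run", "server.py"],
            }
        }
    }


if __name__ == "__main__":
    # Placeholder path: replace with the absolute path to your checkout.
    config = build_claude_config("/ABSOLUTE/PATH/TO/mcp-memgraph")
    print(json.dumps(config, indent=2))
```

The printed JSON can be merged into the Claude config file by hand. Passing `uv_path` explicitly avoids relying on the `PATH` that Claude Desktop sees, which may differ from your shell's.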

3. Chat with the database

Run Memgraph MAGE:

`docker run -p 7687:7687 memgraph/memgraph-mage --schema-info-enabled=True`

The `--schema-info-enabled` configuration setting is set to `True` to allow the LLM to run the `SHOW SCHEMA INFO` query.

Open Claude Desktop and see the Memgraph tools and resources listed. Try it out! (You can load dummy data from Memgraph Lab Datasets.)


🔧 Tools

run_query()

Run a Cypher query against Memgraph.
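To make the tool's behaviour more concrete, here is a minimal sketch of what a `run_query()` helper could look like. It is not the server's actual source. It assumes a driver object that follows the Neo4j Python driver's session API (`driver.session()`, `session.run()`, `record.data()`), which can talk to Memgraph over Bolt. The `Fake*` classes are hypothetical stand-ins so the example runs without a database.

```python
from contextlib import contextmanager


def run_query(driver, query):
    """Run a Cypher query and return each record as a plain dict."""
    # Assumption: `driver` follows the neo4j Python driver's session API,
    # e.g. neo4j.GraphDatabase.driver("bolt://localhost:7687").
    with driver.session() as session:
        return [record.data() for record in session.run(query)]


# Hypothetical in-memory stand-ins so the sketch runs without Memgraph.
class FakeRecord:
    def __init__(self, row):
        self._row = row

    def data(self):
        return dict(self._row)


class FakeSession:
    def __init__(self, rows):
        self._rows = rows

    def run(self, query):
        # Ignores the query text and replays the canned rows.
        return [FakeRecord(row) for row in self._rows]


class FakeDriver:
    def __init__(self, rows):
        self._rows = rows

    @contextmanager
    def session(self):
        yield FakeSession(self._rows)


if __name__ == "__main__":
    driver = FakeDriver([{"name": "Alice"}, {"name": "Bob"}])
    print(run_query(driver, "MATCH (u:User) RETURN u.name AS name"))
```

Returning plain dictionaries keeps the result easy to serialize for an LLM client. A real implementation would swap `FakeDriver` for an actual Bolt driver.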

🗃️ Resources

get_schema()

Get Memgraph schema information (prerequisite: `--schema-info-enabled=True`).
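The prerequisite matters because the resource depends on the `SHOW SCHEMA INFO` query succeeding. The sketch below illustrates one way a `get_schema()` helper might surface that dependency. The `execute` callable and the returned dictionary shape are assumptions made for this example, not the server's actual interface.

```python
SCHEMA_QUERY = "SHOW SCHEMA INFO"


def get_schema(execute):
    """Return schema rows, or a readable hint if the query fails."""
    # `execute` is any callable that takes a Cypher string and returns rows,
    # for example a thin wrapper around a Bolt session (hypothetical helper).
    try:
        return {"ok": True, "rows": execute(SCHEMA_QUERY)}
    except Exception as exc:  # e.g. Memgraph was started without the flag
        return {
            "ok": False,
            "error": f"Could not fetch schema ({exc}). "
                     "Was Memgraph started with --schema-info-enabled=True?",
        }


if __name__ == "__main__":
    # Stand-in executors that simulate success and failure.
    def working(query):
        return [{"query": query}]

    def failing(query):
        raise RuntimeError("schema info disabled")

    print(get_schema(working))
    print(get_schema(failing))
```

Returning a hint instead of raising gives the LLM client something actionable to relay to the user when the flag is missing.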

🗺️ Roadmap

The Memgraph MCP Server is still in its early stages. We're actively working on expanding its capabilities and making it easier to integrate Memgraph into modern AI workflows. In the near future, we'll release a TypeScript version of the server to better support JavaScript-based environments. We also plan to migrate this project into our central AI Toolkit repository, where it will live alongside other tools and integrations for LangChain, LlamaIndex, and MCP. Our goal is to provide a unified, open-source toolkit that makes it seamless to build graph-powered applications and intelligent agents with Memgraph at the core.