Offers community support through Discord channel

References GitHub for project hosting, stars, forks, and issue tracking

Supports interaction with Hugging Face datasets, enabling evaluation of data quality for datasets hosted on the platform

Provides evaluation capabilities for LaTeX formulas in datasets

Supports evaluation of Markdown formatting in datasets and content extraction quality assessment

Acknowledges MLflow as a related project in the model evaluation ecosystem

Integrates with OpenAI models like GPT-4o for LLM-based data quality assessment using various evaluation prompts

Integrates with pre-commit for code quality checks

Enables installation through PyPI package registry

Changelog

- 2024/12/27: Project Initialization

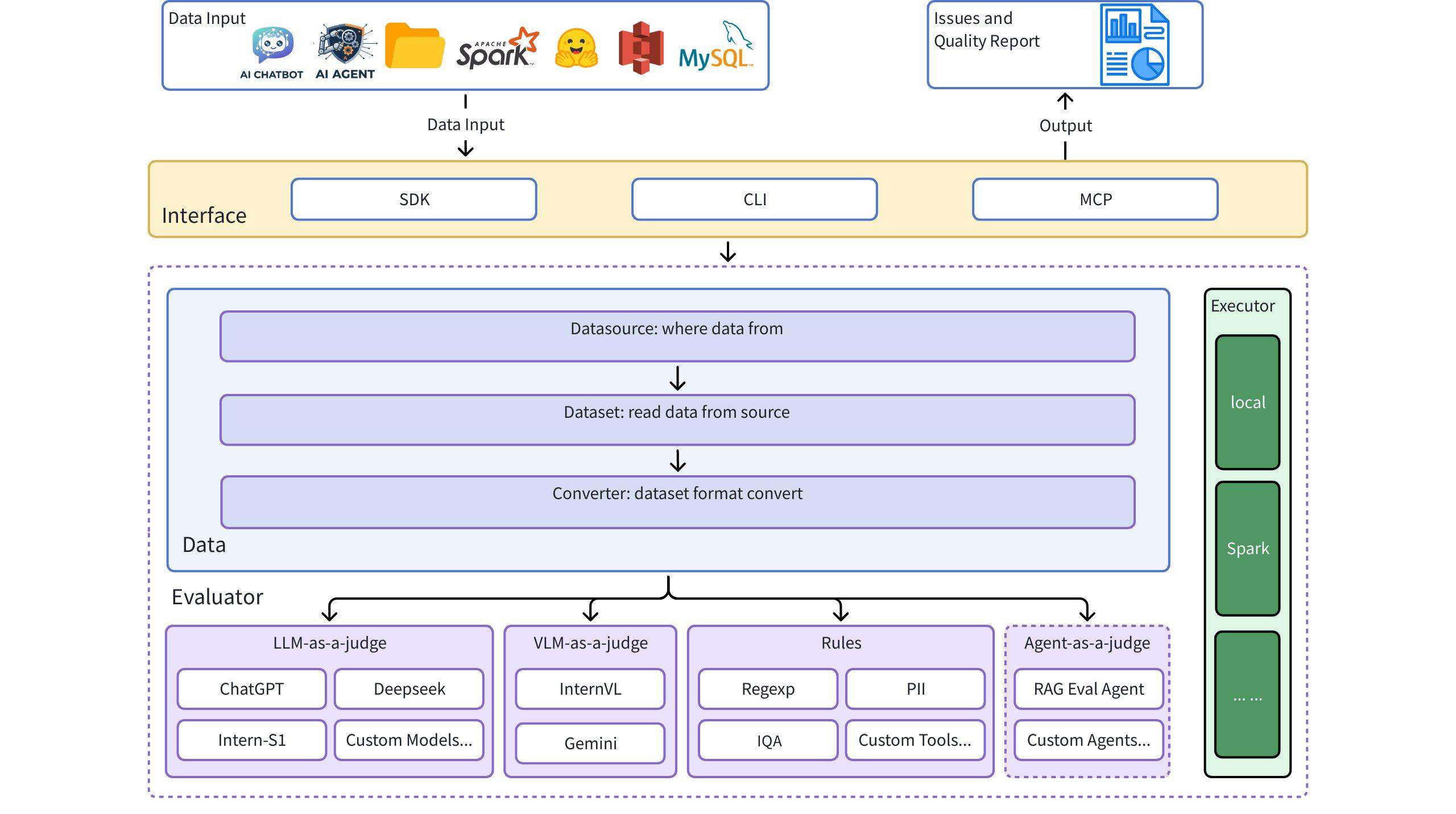

Introduction

Dingo is a data quality evaluation tool that helps you automatically detect data quality issues in your datasets. Dingo provides a variety of built-in rules and model evaluation methods, and also supports custom evaluation methods. Dingo supports commonly used text datasets and multimodal datasets, including pre-training datasets, fine-tuning datasets, and evaluation datasets. In addition, Dingo supports multiple usage methods, including local CLI and SDK, making it easy to integrate into various evaluation platforms, such as OpenCompass.

Architecture Diagram

Quick Start

Installation

Example Use Cases

1. Using Evaluate Core

2. Evaluate Local Text File (Plaintext)

3. Evaluate Hugging Face Dataset

4. Evaluate JSON/JSONL Format

5. Using LLM for Evaluation

Command Line Interface

Evaluate with Rule Sets

Evaluate with LLM (e.g., GPT-4o)

Example config_gpt.json:

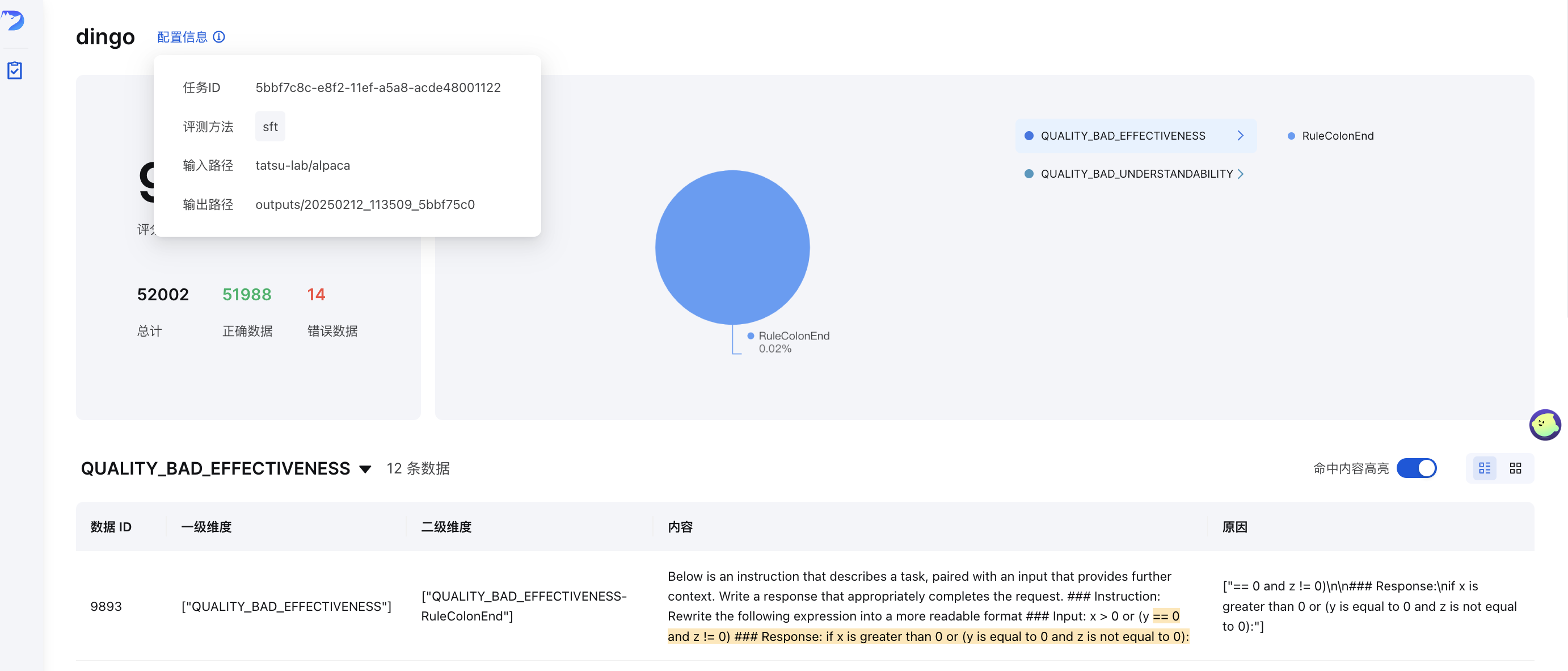

GUI Visualization

After evaluation (with save_data=True), a frontend page will be automatically generated. To manually start the frontend:

Where output_directory contains the evaluation results with a summary.json file.

Online Demo

Try Dingo on our online demo: (Hugging Face)🤗

Data Quality Metrics

Dingo classifies data quality issues into 7 dimensions of Quality Metrics. Each dimension can be evaluated using both rule-based methods and LLM-based prompts:

| Quality Metric | Description | Rule Examples | LLM Prompt Examples |
|---|---|---|---|
| COMPLETENESS | Checks if data is incomplete or missing | RuleColonEnd, RuleContentNull | Evaluates if text abruptly ends with a colon or ellipsis, has mismatched parentheses, or missing critical components |
| EFFECTIVENESS | Checks if data is meaningful and properly formatted | RuleAbnormalChar, RuleHtmlEntity, RuleSpecialCharacter | Detects garbled text, words stuck together without spaces, and text lacking proper punctuation |
| FLUENCY | Checks if text is grammatically correct and reads naturally | RuleAbnormalNumber, RuleNoPunc, RuleWordStuck | Identifies excessively long words, text fragments without punctuation, or content with chaotic reading order |
| RELEVANCE | Detects irrelevant content within the data | RuleHeadWord variants for different languages | Examines for irrelevant information like citation details, headers/footers, entity markers, HTML tags |
| SECURITY | Identifies sensitive information or value conflicts | RuleIDCard, RuleUnsafeWords | Checks for personal information, and content related to gambling, pornography, political issues |
| SIMILARITY | Detects repetitive or highly similar content | RuleDocRepeat | Evaluates text for consecutive repeated content or multiple occurrences of special characters |
| UNDERSTANDABILITY | Assesses how easily data can be interpreted | RuleCapitalWords | Ensures LaTeX formulas and Markdown are correctly formatted, with proper segmentation and line breaks |

LLM Quality Assessment

Dingo provides several LLM-based assessment methods defined by prompts in the dingo/model/prompt directory. These prompts are registered using the prompt_register decorator and can be combined with LLM models for quality evaluation:

Text Quality Assessment Prompts

| Prompt Type | Metric | Description |
|---|---|---|
| TEXT_QUALITY_V2, TEXT_QUALITY_V3 | Various quality dimensions | Comprehensive text quality evaluation covering effectiveness, relevance, completeness, understandability, similarity, fluency, and security |
| QUALITY_BAD_EFFECTIVENESS | Effectiveness | Detects garbled text and anti-crawling content |
| QUALITY_BAD_SIMILARITY | Similarity | Identifies text repetition issues |
| WORD_STICK | Fluency | Checks for words stuck together without proper spacing |
| CODE_LIST_ISSUE | Completeness | Evaluates code blocks and list formatting issues |
| UNREAD_ISSUE | Effectiveness | Detects unreadable characters due to encoding issues |

3H Assessment Prompts (Honest, Helpful, Harmless)

| Prompt Type | Metric | Description |
|---|---|---|
| QUALITY_HONEST | Honesty | Evaluates if responses provide accurate information without fabrication or deception |
| QUALITY_HELPFUL | Helpfulness | Assesses if responses address questions directly and follow instructions appropriately |
| QUALITY_HARMLESS | Harmlessness | Checks if responses avoid harmful content, discriminatory language, and dangerous assistance |

Domain-Specific Assessment Prompts

| Prompt Type | Metric | Description |
|---|---|---|
| TEXT_QUALITY_KAOTI | Exam question quality | Specialized assessment for evaluating the quality of exam questions, focusing on formula rendering, table formatting, paragraph structure, and answer formatting |
| Html_Abstract | HTML extraction quality | Compares different methods of extracting Markdown from HTML, evaluating completeness, formatting accuracy, and semantic coherence |
| DATAMAN_ASSESSMENT | Data Quality & Domain | Evaluates pre-training data quality using the DataMan methodology (14 standards, 15 domains). Assigns a score (0/1), domain type, quality status, and reason. |

Classification Prompts

| Prompt Type | Metric | Description |
|---|---|---|
| CLASSIFY_TOPIC | Topic Categorization | Classifies text into categories like language processing, writing, code, mathematics, role-play, or knowledge Q&A |
| CLASSIFY_QR | Image Classification | Identifies images as CAPTCHA, QR code, or normal images |

Image Assessment Prompts

| Prompt Type | Metric | Description |
|---|---|---|
| IMAGE_RELEVANCE | Image Relevance | Evaluates if an image matches a reference image in terms of face count, feature details, and visual elements |
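The decorator-based registration mentioned at the start of this section can be pictured with a small, self-contained sketch. It does not import Dingo: the `PROMPT_REGISTRY` dictionary, the `prompt_register` signature shown here, the `RepetitionPrompt` class, and the `prompts_for` helper are all hypothetical stand-ins that only illustrate the general pattern of filing a prompt class under a metric name.

```python
from typing import Callable, Dict, List

# Hypothetical registry: metric name -> prompt classes registered under it.
PROMPT_REGISTRY: Dict[str, List[type]] = {}


def prompt_register(metric: str) -> Callable[[type], type]:
    """Return a class decorator that files the decorated class under `metric`."""
    def decorator(cls: type) -> type:
        PROMPT_REGISTRY.setdefault(metric, []).append(cls)
        return cls  # the class itself is returned unchanged
    return decorator


@prompt_register("QUALITY_BAD_SIMILARITY")
class RepetitionPrompt:
    # Placeholder wording; this is not one of Dingo's shipped prompts.
    content = "Does the following text contain consecutive repeated content?"


def prompts_for(metric: str) -> List[type]:
    """Look up every prompt class registered for a metric (empty if none)."""
    return PROMPT_REGISTRY.get(metric, [])
```

With a registry like this, an evaluator can resolve a metric name from a configuration file to the prompt classes it should run, without importing each prompt explicitly.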

Using LLM Assessment in Evaluation

To use these assessment prompts in your evaluations, specify them in your configuration:

You can customize these prompts to focus on specific quality dimensions or to adapt to particular domain requirements. When combined with appropriate LLM models, these prompts enable comprehensive evaluation of data quality across multiple dimensions.
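As a rough illustration, a configuration that selects one prompt and one LLM might be assembled as below. The key names (`custom_config`, `prompt_list`, `llm_config`, `openai`, `model`, `key`) are assumptions made for this sketch and may not match Dingo's actual schema; only the prompt name and the model name come from this document.

```python
import json

# Every key name here is an assumption for illustration, not a confirmed schema.
input_data = {
    "custom_config": {
        # Prompt name taken from the Text Quality Assessment Prompts table.
        "prompt_list": ["QUALITY_BAD_SIMILARITY"],
        "llm_config": {
            "openai": {
                "model": "gpt-4o",
                "key": "YOUR_API_KEY",  # placeholder, never commit a real key
            }
        },
    }
}

# Serialize so the same settings could be stored in a JSON config file.
config_json = json.dumps(input_data, indent=2)
print(config_json)
```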

Rule Groups

Dingo provides pre-configured rule groups for different types of datasets:

| Group | Use Case | Example Rules |
|---|---|---|
| default | General text quality | RuleColonEnd, RuleContentNull, RuleDocRepeat, etc. |
| sft | Fine-tuning datasets | Rules from default plus RuleLineStartWithBulletpoint |
| pretrain | Pre-training datasets | Comprehensive set of 20+ rules including RuleAlphaWords, RuleCapitalWords, etc. |
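The relationship between the `default` and `sft` groups in the table can be sketched in plain Python. This is only a model of the table, not Dingo's internal data structure: the `RULE_GROUPS` mapping and the `rules_in` helper are hypothetical, and the `default` list contains just the three rules the table names.

```python
from typing import Dict, List

# Only the rules named in the table; the real group contains more.
DEFAULT_RULES: List[str] = ["RuleColonEnd", "RuleContentNull", "RuleDocRepeat"]

# Hypothetical mapping from group name to rule names.
RULE_GROUPS: Dict[str, List[str]] = {
    "default": DEFAULT_RULES,
    # sft reuses every default rule and adds one more.
    "sft": DEFAULT_RULES + ["RuleLineStartWithBulletpoint"],
}


def rules_in(group: str) -> List[str]:
    """Return the rule names for a group, failing loudly on unknown names."""
    try:
        return RULE_GROUPS[group]
    except KeyError:
        raise ValueError(f"unknown rule group: {group!r}") from None
```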

To use a specific rule group:

Feature Highlights

Multi-source & Multi-modal Support

- Data Sources: Local files, Hugging Face datasets, S3 storage

- Data Types: Pre-training, fine-tuning, and evaluation datasets

- Data Modalities: Text and image

Rule-based & Model-based Evaluation

- Built-in Rules: 20+ general heuristic evaluation rules

- LLM Integration: OpenAI, Kimi, and local models (e.g., Llama3)

- Custom Rules: Easily extend with your own rules and models

- Security Evaluation: Perspective API integration

Flexible Usage

- Interfaces: CLI and SDK options

- Integration: Easy integration with other platforms

- Execution Engines: Local and Spark

Comprehensive Reporting

- Quality Metrics: 7-dimensional quality assessment

- Traceability: Detailed reports for anomaly tracking

User Guide

Custom Rules, Prompts, and Models

If the built-in rules don't meet your requirements, you can create custom ones:

Custom Rule Example
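A custom rule is essentially a named check that takes a record and reports whether it is bad and why. The sketch below shows that shape without importing Dingo: `RuleResult` and `RuleTrailingColon` are hypothetical stand-ins, and a real custom rule would instead subclass and register with Dingo's own base classes.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass
class RuleResult:
    """Stand-in result type (hypothetical, not Dingo's own class)."""
    is_bad: bool
    name: str
    reason: Optional[str] = None


class RuleTrailingColon:
    """Flags text that stops on a colon, a COMPLETENESS-style check."""

    # ASCII or full-width colon, optionally followed by whitespace, at the end.
    pattern = re.compile(r"[:：]\s*$")

    @classmethod
    def eval(cls, text: str) -> RuleResult:
        if cls.pattern.search(text):
            return RuleResult(True, cls.__name__, "text ends with a colon")
        return RuleResult(False, cls.__name__)
```

Keeping the pattern as a class attribute makes the rule easy to adjust per dataset without touching the evaluation logic.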

Custom LLM Integration

See more examples in:

Execution Engines

Local Execution

Spark Execution

Evaluation Reports

After evaluation, Dingo generates:

- Summary Report (summary.json): Overall metrics and scores

- Detailed Reports: Specific issues for each rule violation

Example summary:
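A summary file of this kind can be consumed with a few lines of Python. The field names used here (`total`, `num_good`, `num_bad`) are assumptions about the layout of summary.json, and the inline JSON string is made-up sample data standing in for a real file.

```python
import json

# Made-up sample; field names are assumptions about summary.json's layout.
summary_text = '{"total": 4, "num_good": 3, "num_bad": 1}'


def good_ratio(summary: dict) -> float:
    """Fraction of records that passed; 0.0 when the run was empty."""
    total = summary["total"]
    return summary["num_good"] / total if total else 0.0


summary = json.loads(summary_text)
print(f"{good_ratio(summary):.0%} of records passed")
```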

MCP Server (Experimental)

Dingo includes an experimental Model Context Protocol (MCP) server. For details on running the server and integrating it with clients like Cursor, please see the dedicated documentation:

Dingo MCP Server Documentation (README_mcp.md)

Research & Publications

- "Comprehensive Data Quality Assessment for Multilingual WebData": WanJuanSiLu: A High-Quality Open-Source Webtext Dataset for Low-Resource Languages

- "Pre-training data quality using the DataMan methodology": DataMan: Data Manager for Pre-training Large Language Models

Future Plans

- Richer graphic and text evaluation indicators

- Audio and video data modality evaluation

- Small model evaluation (fastText, QuRating)

- Data diversity evaluation

Limitations

The current built-in detection rules and model methods focus on common data quality problems. For specialized evaluation needs, we recommend customizing detection rules.

Acknowledgments

Contribution

We appreciate all the contributors for their efforts to improve and enhance Dingo. Please refer to the Contribution Guide for guidance on contributing to the project.

License

This project uses the Apache 2.0 Open Source License.

Citation

If you find this project useful, please consider citing our tool:
