MCP Wolfram Alpha (Server + Client)

Seamlessly integrate Wolfram Alpha into your chat applications.

This project implements an MCP (Model Context Protocol) server that interfaces with the Wolfram Alpha API. Chat-based applications can use it to run computational queries and retrieve structured knowledge, which enables richer conversational features.
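To make the server's role concrete, here is a minimal sketch of how a query could be turned into a Wolfram|Alpha request. It assumes the Short Answers endpoint (`/v1/result`) with the `appid` and `i` parameters; this README does not say which Wolfram|Alpha API the server actually calls, and `build_query_url` is a hypothetical helper, not a function from this project.

```python
from urllib.parse import urlencode

# Assumption: the Short Answers endpoint. The project may use a different
# Wolfram|Alpha API (for example the full results or LLM-oriented API).
WOLFRAM_SHORT_ANSWERS_URL = "https://api.wolframalpha.com/v1/result"


def build_query_url(app_id: str, query: str) -> str:
    """Return a GET URL that asks Wolfram|Alpha for a plain-text answer.

    `app_id` is the value stored in WOLFRAM_API_KEY; `query` is the
    natural-language or mathematical question coming from the chat client.
    """
    if not app_id:
        raise ValueError("a Wolfram|Alpha AppID is required")
    if not query.strip():
        raise ValueError("the query must not be empty")
    # urlencode escapes characters such as '^' and spaces for the query string.
    return f"{WOLFRAM_SHORT_ANSWERS_URL}?{urlencode({'appid': app_id, 'i': query})}"
```

An MCP tool handler would fetch this URL with an HTTP client and return the response body to the calling model.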

Included is an MCP client example that uses Gemini via LangChain. It shows how to connect large language models to the MCP server for real-time interactions with Wolfram Alpha's knowledge engine.

Features

Wolfram|Alpha integration for math, science, and data queries.

Modular architecture: easily extensible to support additional APIs and functionality.

Multi-client support: seamlessly handles interactions from multiple clients or interfaces.

MCP client example using Gemini (via LangChain).

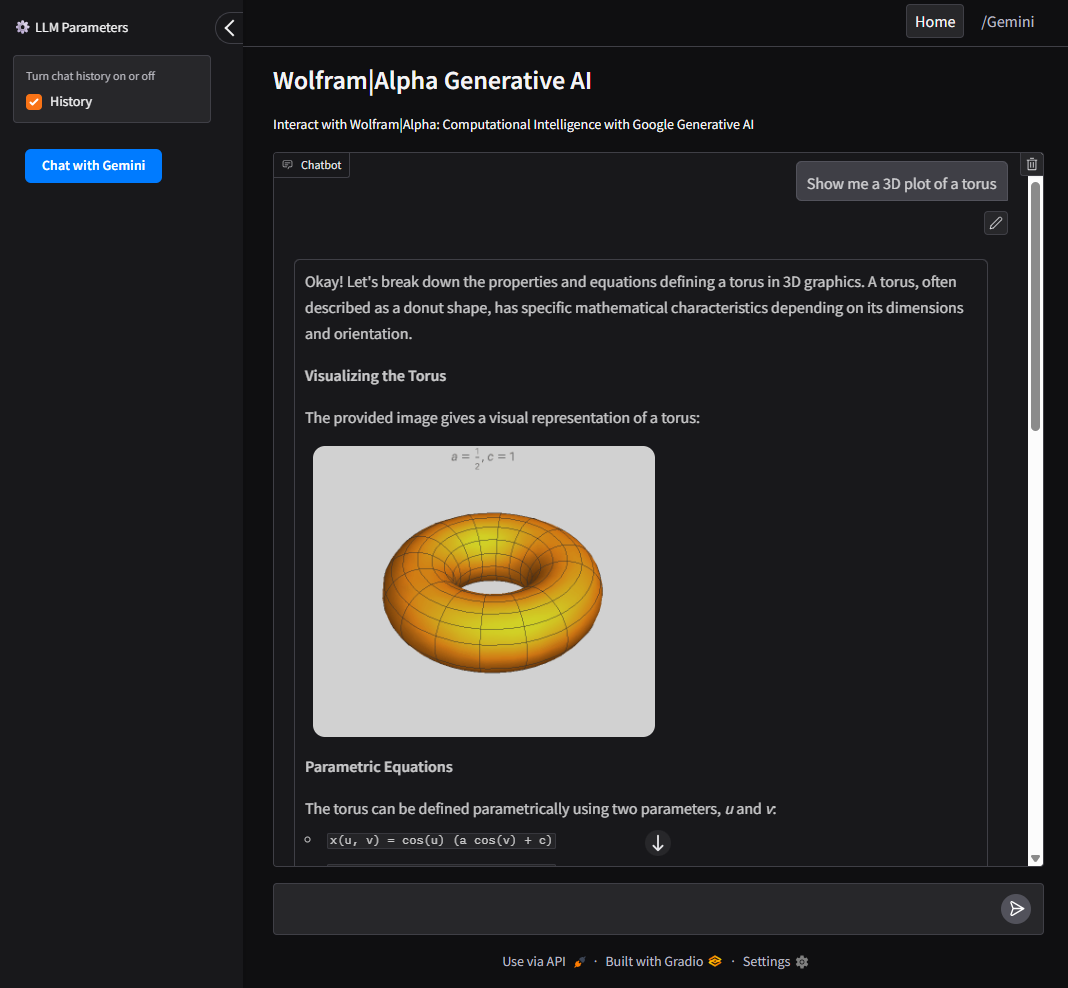

UI support using Gradio for a user-friendly web interface to interact with Google AI and the Wolfram Alpha MCP server.


Installation

Clone the Repo

Set Up Environment Variables

Create a .env file based on the example:

WOLFRAM_API_KEY=your_wolframalpha_appid

GeminiAPI=your_google_gemini_api_key (Optional if using the client method below.)
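As an illustration of how these settings might be read at startup, the sketch below parses a .env file with the standard library and reports which required keys are missing. The helpers `load_env_file` and `missing_keys` are hypothetical; the project itself may load the file differently (for example with a dotenv library), which this README does not state.

```python
from pathlib import Path


def load_env_file(path: str = ".env") -> dict[str, str]:
    """Parse simple KEY=value lines; blank lines and '#' comments are skipped."""
    values: dict[str, str] = {}
    env_path = Path(path)
    if not env_path.exists():
        return values
    for raw_line in env_path.read_text(encoding="utf-8").splitlines():
        line = raw_line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        # Drop optional surrounding quotes around the value.
        values[key.strip()] = value.strip().strip('"').strip("'")
    return values


def missing_keys(values: dict[str, str], required=("WOLFRAM_API_KEY",)) -> list[str]:
    """Return the required keys that are absent or empty."""
    return [key for key in required if not values.get(key)]
```

Only WOLFRAM_API_KEY is treated as required here, since GeminiAPI is needed only for the client.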

Install Requirements

Configuration

To use with the VSCode MCP Server:

Create a configuration file at .vscode/mcp.json in your project root.

Use the example provided in configs/vscode_mcp.json as a template.

For more details, see the VSCode MCP Server Guide.
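For orientation, the sketch below builds the general shape such a file usually takes: a top-level `servers` object with one stdio entry. The server name `wolfram-alpha`, the `python` command, and the script path are assumptions made for illustration; the template in configs/vscode_mcp.json remains the authoritative version.

```python
import json


def build_vscode_mcp_config(server_script: str, name: str = "wolfram-alpha") -> dict:
    """Return a dict in the general shape of a .vscode/mcp.json file.

    `server_script` is a placeholder for wherever the server entry point
    lives in your checkout; this README does not give that path.
    """
    return {
        "servers": {
            name: {
                "type": "stdio",          # VS Code starts the server as a subprocess
                "command": "python",      # assumption: the server runs under Python
                "args": [server_script],
            }
        }
    }


def render_config(config: dict) -> str:
    """Serialize the config as indented JSON, ready to be written to disk."""
    return json.dumps(config, indent=2)
```

Calling `render_config(build_vscode_mcp_config("path/to/server.py"))` yields text you could compare against the provided template before saving it.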

To use with Claude Desktop:

Client Usage Example

This project includes an LLM client that communicates with the MCP server.

Run with Gradio UI

Required: GeminiAPI

Provides a local web interface to interact with Google AI and Wolfram Alpha.

To run the client directly from the command line:

Docker

To build and run the client inside a Docker container:

UI

Intuitive interface built with Gradio to interact with both Google AI (Gemini) and the Wolfram Alpha MCP server.

Lets users switch between Wolfram Alpha, Google AI (Gemini), and the query history.

Run as CLI Tool

Required: GeminiAPI

To run the client directly from the command line:

Docker

To build and run the client inside a Docker container: