MCP Server for Vertex AI Search

Integrates with Google's Vertex AI Search to enable document searching using Gemini with grounding. Allows querying across one or multiple Vertex AI data stores to retrieve information from private data sources.


This is an MCP server to search documents using Vertex AI.

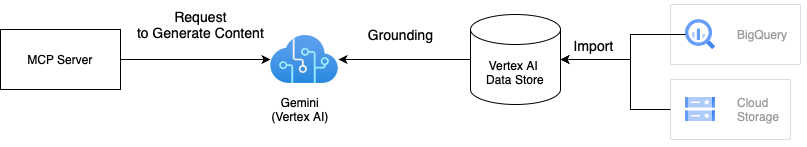

Architecture

This solution uses Gemini with Vertex AI grounding to search documents in your private data. Grounding improves the quality of search results by basing Gemini's responses on your data stored in Vertex AI Search data stores. We can connect one or multiple Vertex AI data stores to the MCP server. For more details on grounding, refer to the Vertex AI Grounding documentation.
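Grounding against Vertex AI Search refers to each data store by its full resource name, which combines the project_id, location, and datastore_id values from the config file (see Appendix A). The following is a minimal Python sketch of that naming scheme, assuming the data store sits in the default collection (default_collection). The DataStore class and datastore_resource_name function are hypothetical helpers for illustration, not part of this server's code, which may assemble the name differently.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DataStore:
    """One entry of the data_stores list in config.yml (field names from Appendix A)."""

    project_id: str
    location: str
    datastore_id: str


def datastore_resource_name(ds: DataStore) -> str:
    """Build the full Vertex AI Search data store resource name.

    Assumption: the data store lives in the default collection
    ("default_collection").
    """
    return (
        f"projects/{ds.project_id}/locations/{ds.location}"
        f"/collections/default_collection/dataStores/{ds.datastore_id}"
    )


if __name__ == "__main__":
    # Hypothetical values for illustration only.
    store = DataStore(project_id="my-project", location="us", datastore_id="my-datastore")
    print(datastore_resource_name(store))
```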


How to use

There are two ways to use this MCP server: clone the repository, or install the Python package. If you want to run it on Docker, the first approach is the better fit, because the project provides a Dockerfile.

1. Clone the repository

```shell
# Clone the repository
git clone git@github.com:ubie-oss/mcp-vertexai-search.git
cd mcp-vertexai-search

# Create a virtual environment
uv venv

# Install the dependencies
uv sync --all-extras

# Check the command
uv run mcp-vertexai-search
```

2. Install the Python package

The package isn't published to PyPI yet, but we can install it from the repository. To run the MCP server we need a config file derived from config.yml.template; the Python package doesn't include this template, so take it from the repository. Please refer to Appendix A: Config file for the details of the config file.

```shell
# Install the package
pip install git+https://github.com/ubie-oss/mcp-vertexai-search.git

# Check the command
mcp-vertexai-search --help
```

Development

Prerequisites

- Vertex AI data store: please refer to the official documentation about data stores for more information.

Set up Local Environment

```shell
# Optional: Install uv
python -m pip install -r requirements.setup.txt

# Create a virtual environment
uv venv
uv sync --all-extras
```

Run the MCP server

The server supports two transports: SSE (Server-Sent Events) and stdio (standard input/output). We select the transport with the --transport flag.
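With stdio, the MCP client starts the server as a subprocess and exchanges messages over its standard input and output; with SSE, the server runs as a standalone HTTP process that clients connect to. Many desktop MCP clients, such as Claude Desktop, register stdio servers in a JSON file under an mcpServers key. The sketch below builds such an entry in Python, assuming the package was installed with pip so that mcp-vertexai-search is on PATH. The server name, config path, and stdio_client_entry helper are hypothetical placeholders.

```python
import json


def stdio_client_entry(server_name: str, config_path: str) -> dict:
    """Build an "mcpServers" entry that launches this server over stdio.

    Assumes the mcp-vertexai-search entry point is on PATH.
    server_name and config_path are placeholders.
    """
    return {
        "mcpServers": {
            server_name: {
                "command": "mcp-vertexai-search",
                "args": ["serve", "--config", config_path, "--transport", "stdio"],
            }
        }
    }


if __name__ == "__main__":
    entry = stdio_client_entry("vertexai-search", "/path/to/config.yml")
    print(json.dumps(entry, indent=2))
```

An absolute --config path is the safer choice here, because the client may start the server from a different working directory.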

We can configure the MCP server with a YAML file. config.yml.template is a template for the config file; copy it to config.yml and modify it to fit your needs.
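Because the installed package does not ship the template, the config file has to start as a copy of config.yml.template from the repository. A minimal Python sketch of that step, assuming the template sits at the repository root; ensure_config is a hypothetical helper, and it never overwrites an existing config.yml.

```python
import shutil
from pathlib import Path


def ensure_config(repo_dir: Path, config_name: str = "config.yml") -> Path:
    """Create config.yml from config.yml.template if it does not exist yet.

    repo_dir is assumed to be the root of a clone of the repository.
    An existing config file is left untouched.
    """
    template = repo_dir / "config.yml.template"
    config = repo_dir / config_name
    if not config.exists():
        if not template.exists():
            raise FileNotFoundError(f"template not found: {template}")
        shutil.copyfile(template, config)
    return config
```

Calling ensure_config(Path(".")) from the root of a clone yields a config.yml to edit (see Appendix A) before starting the server.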

```shell
uv run mcp-vertexai-search serve \
  --config config.yml \
  --transport <stdio|sse>
```

Test the Vertex AI Search

We can test Vertex AI Search without the MCP server by using the mcp-vertexai-search search command.
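The search command passes the query through the --query option, so a multi-word query must be quoted as one shell argument. When scripting this check, handing an argument list to subprocess avoids quoting issues entirely. A minimal sketch, assuming the mcp-vertexai-search entry point is on PATH (inside the uv environment, prefix the list with "uv", "run"); search_argv and run_search are hypothetical helpers, and the query string is only an example.

```python
import shlex
import subprocess


def search_argv(config_path: str, query: str) -> list[str]:
    """Build the argv for the search subcommand without going through a shell.

    A list keeps a multi-word query as a single argument.
    """
    return ["mcp-vertexai-search", "search", "--config", config_path, "--query", query]


def run_search(config_path: str, query: str) -> str:
    """Run the search command and return its standard output.

    Assumes the entry point is on PATH; raises CalledProcessError on failure.
    """
    result = subprocess.run(
        search_argv(config_path, query), capture_output=True, text=True, check=True
    )
    return result.stdout


if __name__ == "__main__":
    argv = search_argv("config.yml", "recent quarterly financial reports")
    # Print the equivalent shell command with correct quoting.
    print(shlex.join(argv))
```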

```shell
uv run mcp-vertexai-search search \
  --config config.yml \
  --query <your-query>
```

Appendix A: Config file

config.yml.template is a template for the config file.

- server
  - server.name: The name of the MCP server
- model
  - model.model_name: The name of the Vertex AI model
  - model.project_id: The project ID of the Vertex AI model
  - model.location: The location of the model (e.g. us-central1)
  - model.impersonate_service_account: The service account to impersonate
  - model.generate_content_config: The configuration for the generate content API
- data_stores: The list of Vertex AI data stores
  - data_stores.project_id: The project ID of the Vertex AI data store
  - data_stores.location: The location of the Vertex AI data store (e.g. us)
  - data_stores.datastore_id: The ID of the Vertex AI data store
  - data_stores.tool_name: The name of the tool
  - data_stores.description: The description of the Vertex AI data store
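To make the nested fields above concrete, here is a hypothetical config expressed as the Python dict a YAML loader would produce, plus a small validator. All values are placeholders, and which fields count as required is an assumption (impersonate_service_account and generate_content_config are treated as optional). Duplicate tool_name values are flagged on the assumption that each data store is presumably exposed as its own MCP tool, and tool names must be unique within a server. config.yml.template remains the authoritative reference.

```python
from typing import Any

# Hypothetical config mirroring the fields in Appendix A; every value is a placeholder.
EXAMPLE_CONFIG: dict[str, Any] = {
    "server": {"name": "vertexai-search"},
    "model": {
        "model_name": "gemini-model-name",  # placeholder, not a real model ID
        "project_id": "my-project",
        "location": "us-central1",
        "impersonate_service_account": "sa@my-project.iam.gserviceaccount.com",
        "generate_content_config": {},  # accepted keys are defined by the template
    },
    "data_stores": [
        {
            "project_id": "my-project",
            "location": "us",
            "datastore_id": "my-datastore",
            "tool_name": "search_documents",
            "description": "Internal documents",
        }
    ],
}

# Assumption: these keys are required; the real schema may differ.
REQUIRED_MODEL_KEYS = {"model_name", "project_id", "location"}
REQUIRED_DATA_STORE_KEYS = {"project_id", "location", "datastore_id", "tool_name", "description"}


def validate_config(config: dict[str, Any]) -> list[str]:
    """Return a list of problems found in a parsed config (empty if none)."""
    problems: list[str] = []
    if not config.get("server", {}).get("name"):
        problems.append("server.name is missing")
    model = config.get("model", {})
    for key in sorted(REQUIRED_MODEL_KEYS - set(model)):
        problems.append(f"model.{key} is missing")
    data_stores = config.get("data_stores", [])
    if not data_stores:
        problems.append("data_stores is empty")
    seen_tools: set[str] = set()
    for i, ds in enumerate(data_stores):
        for key in sorted(REQUIRED_DATA_STORE_KEYS - set(ds)):
            problems.append(f"data_stores[{i}].{key} is missing")
        name = ds.get("tool_name")
        # Each tool name should appear only once across all data stores.
        if name in seen_tools:
            problems.append(f"data_stores[{i}].tool_name {name!r} is duplicated")
        elif name:
            seen_tools.add(name)
    return problems


if __name__ == "__main__":
    print(validate_config(EXAMPLE_CONFIG))
```

With PyYAML installed, yaml.safe_load on your config.yml returns a dict of this shape that can be passed to validate_config.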

