MCP-Weather Server

Connects to the DeepSeek platform (which uses the OpenAI API format) to access LLM capabilities through the deepseek-chat model.

Click on "Install Server".

Wait a few minutes for the server to deploy. Once ready, it will show a "Started" state.

In the chat, type @ followed by the MCP server name and your instructions, e.g., "@MCP-Weather Server what's the forecast for Tokyo tomorrow?"

That's it! The server will respond to your query, and you can continue using it as needed.

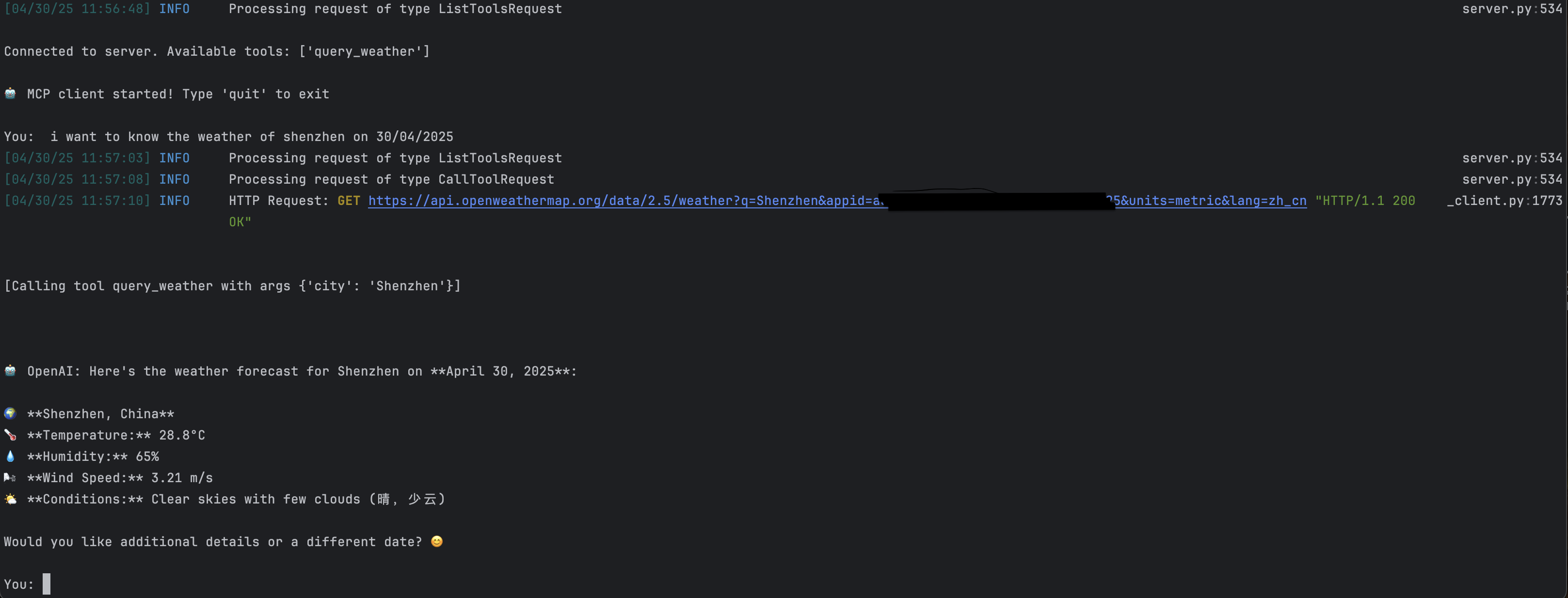

Here is a step-by-step guide with screenshots.

MCP-Augmented LLM for Reaching Weather Information

Overview

This system enhances Large Language Models (LLMs) with weather data capabilities using the Model Context Protocol (MCP) framework.
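To make the overview more concrete, the following is a minimal sketch of how a client might offer an MCP tool to an OpenAI-format chat endpoint such as DeepSeek's. The tool name `get_weather`, its input schema, and the helper names `to_openai_tool` and `build_chat_request` are assumptions made for illustration; the project's actual client code may be organized differently.

```python
import json

# Hypothetical MCP tool description, shaped like an entry from an MCP
# server's tool listing (name, description, inputSchema).
WEATHER_TOOL = {
    "name": "get_weather",
    "description": "Return the current weather for a city.",
    "inputSchema": {
        "type": "object",
        "properties": {"city": {"type": "string"}},
        "required": ["city"],
    },
}


def to_openai_tool(mcp_tool: dict) -> dict:
    """Convert an MCP tool description into an OpenAI-format tool entry."""
    return {
        "type": "function",
        "function": {
            "name": mcp_tool["name"],
            "description": mcp_tool["description"],
            "parameters": mcp_tool["inputSchema"],
        },
    }


def build_chat_request(question: str, model: str = "deepseek-chat") -> dict:
    """Build a chat-completion request body that offers the weather tool."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": question}],
        "tools": [to_openai_tool(WEATHER_TOOL)],
    }


if __name__ == "__main__":
    # Print the request body only; nothing is sent over the network.
    print(json.dumps(build_chat_request("What's the weather in Tokyo?"), indent=2))
```

When the model replies with a tool call, the client would forward the arguments to the MCP server and return the result to the model as a follow-up message. That round trip is omitted here.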


Demo

Components

MCP Client: Hosts the LLM

MCP Server: Intermediary that connects the client to external tools and resources
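As a rough illustration of the server's role, the sketch below shows how a weather tool might build an OpenWeather request and summarize the JSON response for the LLM. The function names `build_weather_url` and `format_weather` and the choice of metric units are assumptions; the base URL is the `OPENWEATHER_API_BASE` value from the Configuration section, and the HTTP call itself (for example with httpx) is left out.

```python
from urllib.parse import urlencode

# Taken from OPENWEATHER_API_BASE in the Configuration section.
OPENWEATHER_API_BASE = "https://api.openweathermap.org/data/2.5/weather"


def build_weather_url(city: str, api_key: str, units: str = "metric") -> str:
    """Build the current-weather request URL for a city name."""
    query = urlencode({"q": city, "appid": api_key, "units": units})
    return f"{OPENWEATHER_API_BASE}?{query}"


def format_weather(payload: dict) -> str:
    """Turn an OpenWeather current-weather response into a one-line summary."""
    main = payload.get("main", {})
    conditions = payload.get("weather") or [{}]
    return (
        f"{payload.get('name', 'Unknown')}: "
        f"{conditions[0].get('description', 'n/a')}, "
        f"{main.get('temp', 'n/a')} °C, "
        f"humidity {main.get('humidity', 'n/a')}%"
    )


if __name__ == "__main__":
    # Hand-written sample in the shape of an OpenWeather response.
    sample = {
        "name": "Tokyo",
        "main": {"temp": 21.5, "humidity": 60},
        "weather": [{"description": "clear sky"}],
    }
    print(build_weather_url("Tokyo", "<your_openweather_api_key>"))
    print(format_weather(sample))
```

Returning a short text summary rather than the raw JSON keeps the tool result compact for the model, though either approach would work.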

Configuration

DeepSeek Platform

BASE_URL=https://api.deepseek.com

MODEL=deepseek-chat

OPENAI_API_KEY=<your_api_key_here>

OpenWeather Platform

OPENWEATHER_API_BASE=https://api.openweathermap.org/data/2.5/weather

USER_AGENT=weather-app/1.0

API_KEY=<your_openweather_api_key>

Installation & Execution

Initialize project:

uv init weather_mcp

cd weather_mcp

where weather_mcp is the project directory name.

Install dependencies:

uv add mcp httpx

Launch system:

cd ./utils

python client.py server.py

Note: Replace all placeholders (<your_api_key_here>, <your_openweather_api_key>) with actual API keys.
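One way to catch an unreplaced placeholder early is to validate the settings at startup. The sketch below assumes the values from the Configuration section are exposed as environment variables; the `Settings` class and `load_settings` function are hypothetical helpers, not part of the project, and the project may read its configuration differently (for example from a .env file).

```python
import os
from dataclasses import dataclass


@dataclass(frozen=True)
class Settings:
    base_url: str
    model: str
    llm_api_key: str
    weather_api_base: str
    user_agent: str
    weather_api_key: str


def load_settings(env=None) -> Settings:
    """Read the configuration keys and reject missing or placeholder API keys."""
    env = os.environ if env is None else env
    settings = Settings(
        base_url=env.get("BASE_URL", "https://api.deepseek.com"),
        model=env.get("MODEL", "deepseek-chat"),
        llm_api_key=env.get("OPENAI_API_KEY", ""),
        weather_api_base=env.get(
            "OPENWEATHER_API_BASE",
            "https://api.openweathermap.org/data/2.5/weather",
        ),
        user_agent=env.get("USER_AGENT", "weather-app/1.0"),
        weather_api_key=env.get("API_KEY", ""),
    )
    # A value such as "<your_api_key_here>" means the placeholder was never replaced.
    for name, key in (
        ("OPENAI_API_KEY", settings.llm_api_key),
        ("API_KEY", settings.weather_api_key),
    ):
        if not key or (key.startswith("<") and key.endswith(">")):
            raise ValueError(f"{name} is missing or still a placeholder")
    return settings


if __name__ == "__main__":
    try:
        print(load_settings())
    except ValueError as err:
        print(f"Configuration error: {err}")
```

Failing fast here gives a clearer message than an authentication error returned later by DeepSeek or OpenWeather.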
