Provides comprehensive tools for managing and analyzing Apache Druid clusters, including data management, ingestion management, monitoring, and health checks. Enables executing SQL queries, managing datasources, compaction, retention rules, segments, and streaming ingestion supervisors.

Supports deployment via Docker with pre-built images available on Docker Hub, offering configuration through environment variables for connecting to Druid clusters with options for both SSE and STDIO transport modes.

Kubernetes deployment of the Druid MCP Server is planned; it is listed in the Roadmap section.

Integrates with PostgreSQL as the metadata storage for Apache Druid clusters, supporting the backend infrastructure for the Druid management capabilities.


Druid MCP Server

A comprehensive Model Context Protocol (MCP) server for Apache Druid that provides extensive tools, resources, and prompts for managing and analyzing Druid clusters.

Developed by iunera

Overview

This MCP server implements a feature-based architecture where each package represents a distinct functional area of Druid management. The server provides three main types of MCP components:

Tools - Executable functions for performing operations

Resources - Data providers for accessing information

Prompts - AI-assisted guidance templates


Video Walkthrough

Learn how to integrate AI agents with Apache Druid using the MCP server. This tutorial demonstrates time series data exploration, statistical analysis, and data ingestion using natural language with AI assistants like Claude, ChatGPT, and Gemini.


🧠 Data Philter: AI-Powered UI for Druid

Experience your data like never before with Data Philter, a local-first AI gateway designed by iunera. It leverages this Druid MCP Server to provide a seamless, conversational interface for your Druid cluster.

Natural Language Queries: Ask questions in plain English and get results instantly.

Local & Secure: Runs completely locally with support for Ollama models (or OpenAI).

Plug & Play: Works out-of-the-box with the Development Druid Installation.

Features

Spring AI MCP Server integration

Tool-based Architecture: Complete MCP protocol compliance with automatic JSON schema generation

Multiple Transport Modes: STDIO, SSE, and Streamable HTTP support, including OAuth

Real-time Communication: Server-Sent Events with streaming capabilities

Customizable Prompt Templates: AI-assisted guidance with template customization

Comprehensive Error Handling: Graceful error handling with meaningful responses

Architecture & Organization

Feature-based Package Organization: Each package represents a distinct Druid management area

Auto-discovery: Automatic registration of tools, resources, and prompts via annotations

Enterprise Ready: Production-grade configuration and security features

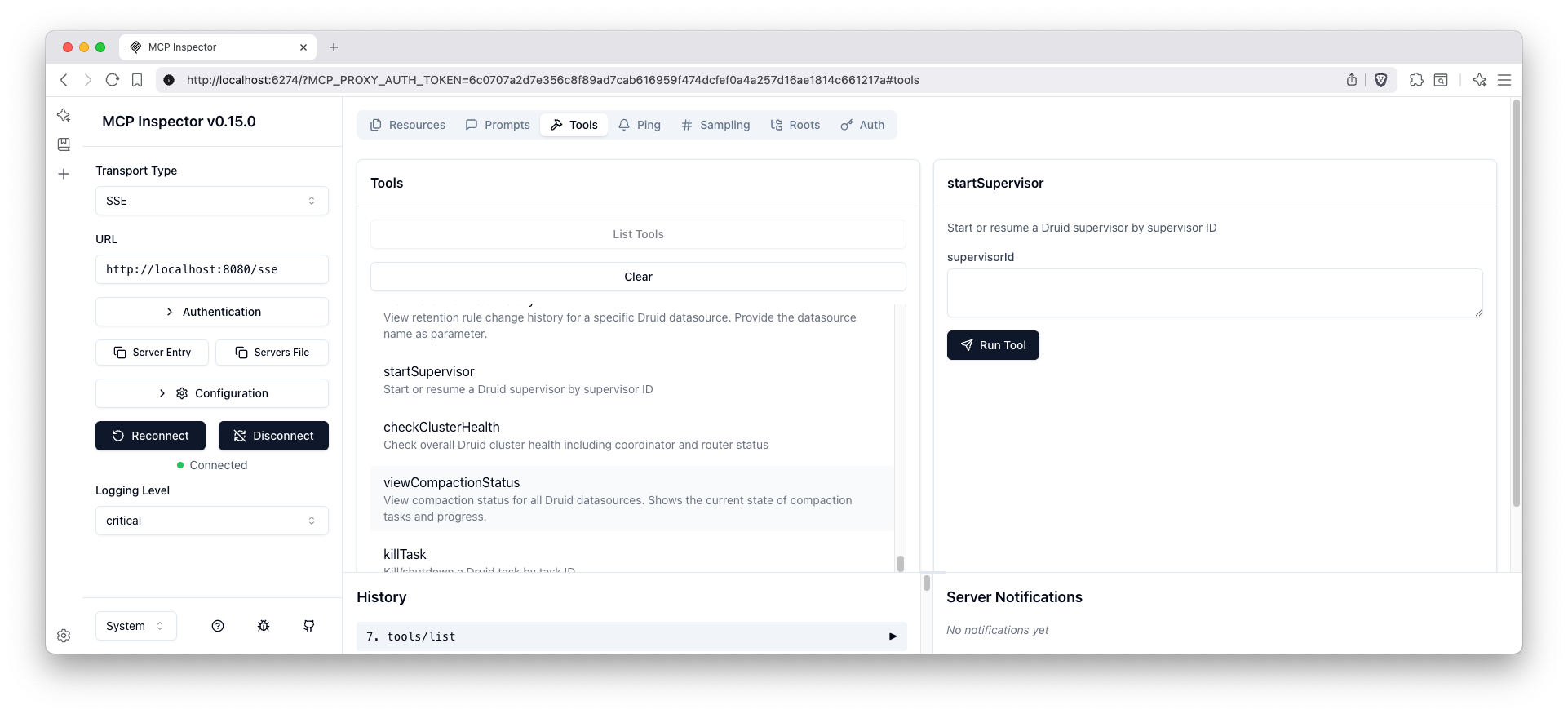

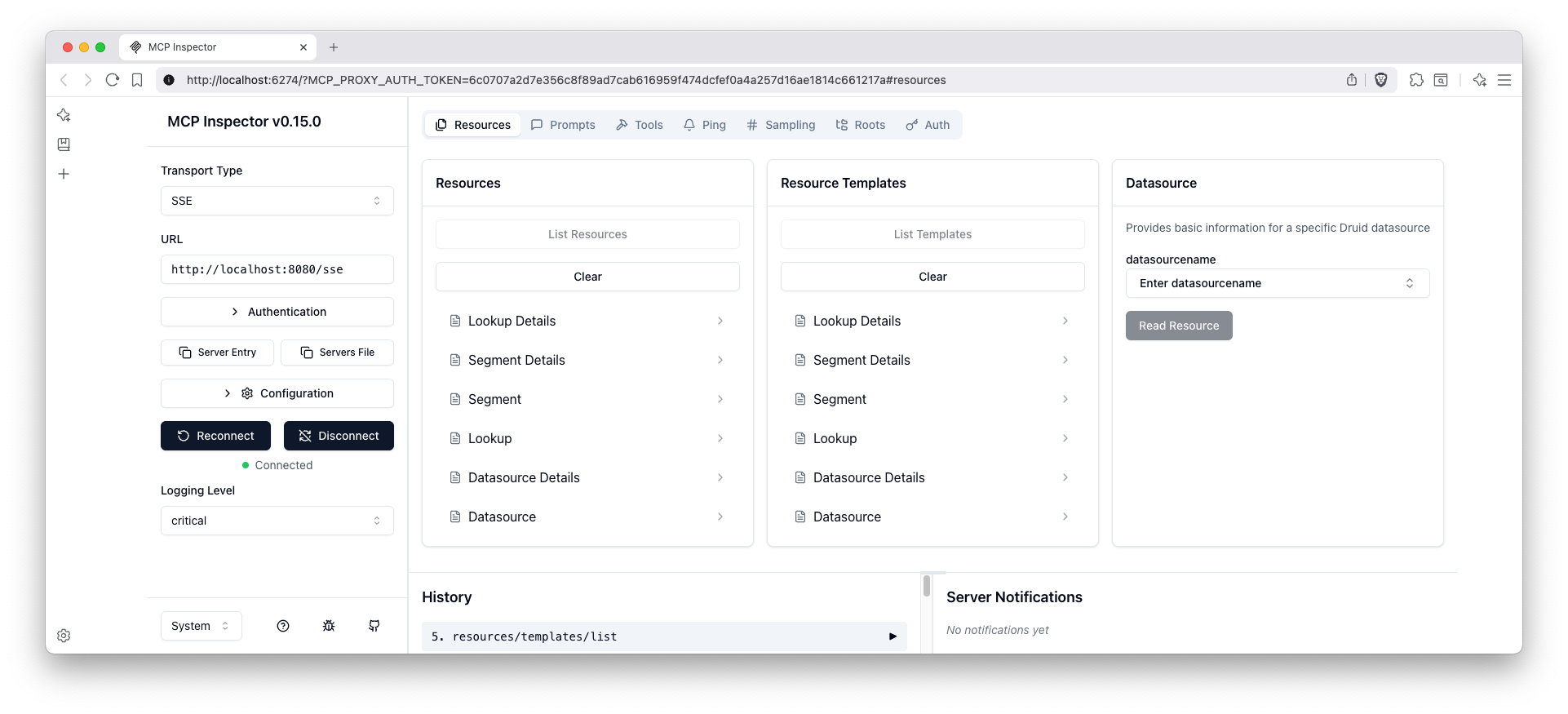

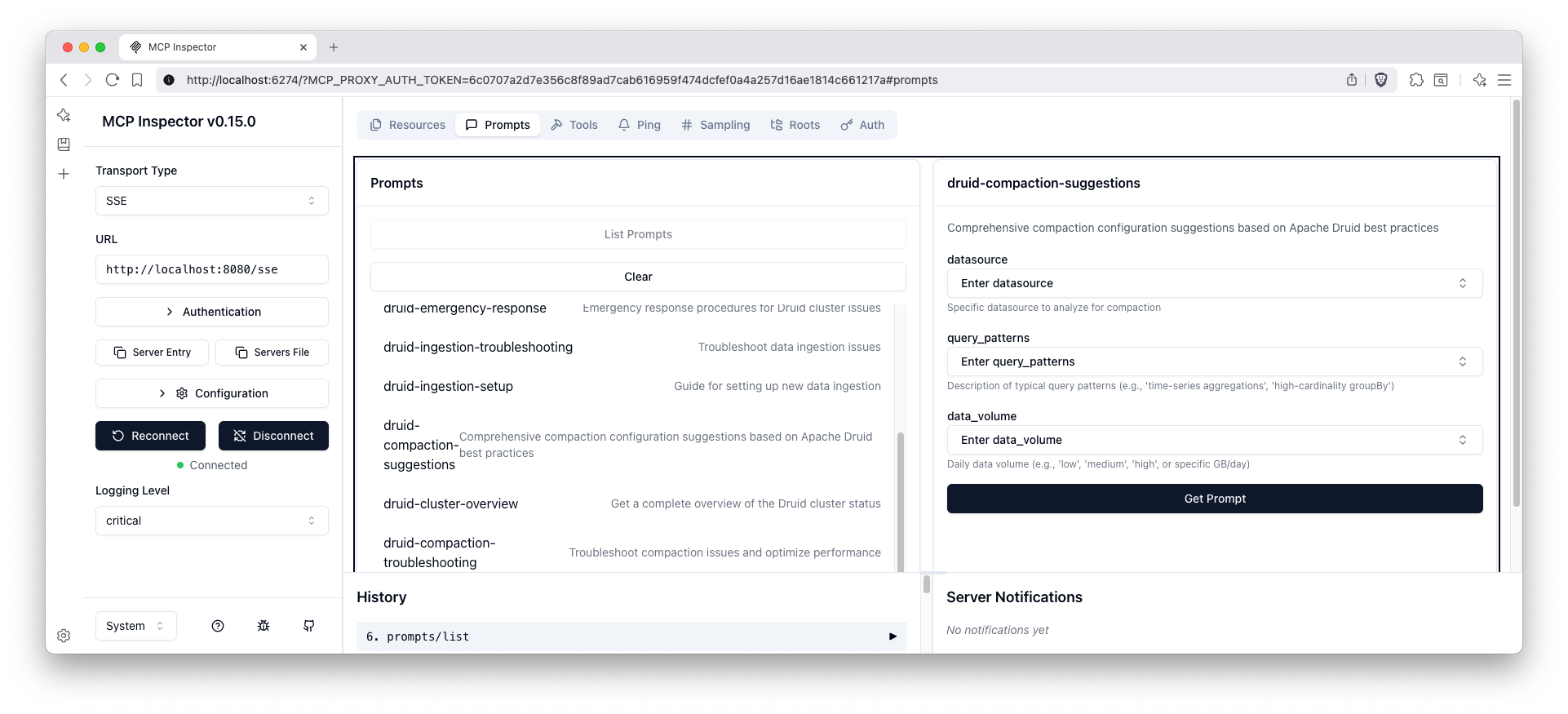

MCP Inspector Interface

When connected to an MCP client, you can inspect the available tools, resources, and prompts through the MCP inspector interface:

Available Tools

The tools interface shows all available Druid management functions organized by feature areas including data management, ingestion management, and monitoring & health.

Available Resources

The resources interface displays all accessible Druid data sources and metadata that can be retrieved through the MCP protocol.

Available Prompts

The prompts interface shows all AI-assisted guidance templates available for various Druid management tasks and data analysis workflows.

Quick Start

MCP Configuration for LLMs

A ready-to-use MCP configuration file is provided at mcp-servers-config.json that can be used with LLM clients to connect to this Druid MCP server.

Examples

The configuration includes both transport options:

STDIO (default): see examples/stdio/README.md - server is spawned by the MCP client over STDIO.

Streamable HTTP (profile: http): see examples/streamable-http/README.md - single /mcp endpoint per MCP 2025-06-18.

Docker examples using environment variables:

Note on Spring profiles:

Default profile: stdio (no SPRING_PROFILES_ACTIVE needed)

HTTP profile: set SPRING_PROFILES_ACTIVE=http to enable Streamable HTTP at /mcp

Prerequisites

Java 24

Maven 3.6+

Apache Druid cluster running with router on port 8888

Build and Run

The server will start on port 8080 by default.

For detailed build instructions, testing, Docker setup, and development guidelines, see development.md.

Security & Authentication

Streamable HTTP and SSE transports are secured with OAuth 2.0 by default.

Clients must send a valid Bearer token in the Authorization header when connecting.

Example: Authorization: Bearer YOUR_JWT_TOKEN
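As a minimal sketch of what a client has to send, the snippet below builds that header for a given token. The helper name `bearer_headers` and the placeholder token are illustrative assumptions, not part of the server's API; how the token is obtained depends on your OAuth 2.0 provider.

```python
def bearer_headers(token: str) -> dict[str, str]:
    """Return HTTP headers carrying an OAuth 2.0 bearer token."""
    token = token.strip()
    if not token:
        # Fail early instead of sending an empty credential.
        raise ValueError("a non-empty access token is required")
    return {"Authorization": f"Bearer {token}"}


# Hypothetical usage: attach these headers to every request to the MCP endpoint.
headers = bearer_headers("YOUR_JWT_TOKEN")
print(headers["Authorization"])
```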

Environment Variables

DRUID_MCP_SECURITY_OAUTH2_ENABLED

Description: Enables or disables OAuth2 security for client authentication.

Type: Boolean

Default: true (OAuth2 is enabled by default, as stated above)

Usage: Set to false to disable OAuth2 authentication. When disabled, clients can access the server without providing OAuth2 tokens.

For enterprise SSO integration (OpenID Connect, Azure AD, Keycloak, etc.), please send an inquiry to consulting@iunera.com and see Contact & Support.

Installation from Maven Central

If you prefer to use the pre-built JAR without building from source, you can download and run it directly from Maven Central.

Prerequisites

Java 24 JRE only

Download and Run

Download the JAR from Maven Central https://repo.maven.apache.org/maven2/com/iunera/druid-mcp-server/

For Developers

For detailed development information including build instructions, testing guidelines, architecture details, and contributing guidelines, see development.md.

Available Tools by Feature

The MCP server auto-discovers all tools via annotations. In Read-only mode, any tool that would modify the Druid cluster is not registered and will not appear in the MCP client. The lists below reflect the current implementation.

Data Management

Feature | Tool | Description | Parameters |
Datasource | | List all available Druid datasource names | None |
Datasource | | Show detailed information for a specific datasource including column information | |
Datasource | | Kill a datasource permanently, removing all data and metadata | |
Lookup | | List all available Druid lookups from the coordinator | None |
Lookup | | Get configuration for a specific lookup | |
Lookup | | Update configuration for a specific lookup | |
Segments | | List all segments across all datasources | None |
Segments | | Get metadata for specific segments | |
Segments | | Get all segments for a specific datasource | |
Query | | Execute a SQL query against Druid datasources | |
Retention | | View retention rules for all datasources or a specific one | |
Retention | | Update retention rules for a datasource | |
Compaction | | View compaction configurations for all datasources | None |
Compaction | | View compaction configuration for a specific datasource | |
Compaction | | Edit compaction configuration for a datasource | |
Compaction | | Delete compaction configuration for a datasource | |
Compaction | | View compaction status for all datasources | None |
Compaction | | View compaction status for a specific datasource | |

Ingestion Management

Feature | Tool | Description | Parameters |
Ingestion Spec | | Create a batch ingestion template | |
Ingestion Spec | | Create and submit an ingestion specification | |
Supervisors | | List all streaming ingestion supervisors | None |
Supervisors | | Get status of a specific supervisor | |
Supervisors | | Suspend a streaming supervisor | |
Supervisors | | Start or resume a streaming supervisor | |
Supervisors | | Terminate a streaming supervisor | |
Tasks | | List all ingestion tasks | None |
Tasks | | Get status of a specific task | |
Tasks | | Shutdown a running task | |

Monitoring & Health

Feature | Tool | Description | Parameters |
Basic Health | | Check overall cluster health status | None |
Basic Health | | Get status of specific Druid services | |
Basic Health | | Get cluster configuration information | None |
Diagnostics | | Run comprehensive cluster diagnostics | None |
Diagnostics | | Analyze cluster performance issues | None |
Diagnostics | | Generate detailed health report | None |
Functionality | | Test query functionality across services | None |
Functionality | | Test ingestion functionality | None |
Functionality | | Validate connectivity between cluster components | None |

Basic Security

Feature | Tool | Description | Parameters |
Authentication | | List all users in the Druid authentication system for a specific authenticator | |
Authentication | | Get details of a specific user from the Druid authentication system | |
Authentication | | Create a new user in the Druid authentication system | |
Authentication | | Delete a user from the Druid authentication system. Use with caution as this action cannot be undone. | |
Authentication | | Set or update the password for a user in the Druid authentication system | |
Authorization | | List all users in the Druid authorization system for a specific authorizer | |
Authorization | | Get details of a specific user from the Druid authorization system including their roles | |
Authorization | | List all roles in the Druid authorization system for a specific authorizer | |
Authorization | | Get details of a specific role from the Druid authorization system including its permissions | |
Authorization | | Create a new user in the Druid authorization system | |
Authorization | | Delete a user from the Druid authorization system. Use with caution as this action cannot be undone. | |
Authorization | | Create a new role in the Druid authorization system | |
Authorization | | Delete a role from the Druid authorization system. Use with caution as this action cannot be undone. | |
Authorization | | Set permissions for a role in the Druid authorization system. Provide permissions as a JSON array. | |
Authorization | | Assign a role to a user in the Druid authorization system | |
Authorization | | Unassign a role from a user in the Druid authorization system | |
Configuration | | Get the configured authenticatorChain and authorizers from the Basic Auth configuration. Every other security tool depends on this information, so LLMs need to call this tool first. | None |

Available Resources by Feature

Feature | Resource URI Pattern | Description | Parameters |
Datasource | | Access datasource information and metadata | |
Datasource | | Access detailed datasource information including schema | |
Lookup | | Access lookup configuration and data | |
Segments | | Access segment metadata and information | |

Available Prompts by Feature

Feature | Prompt Name | Description | Parameters |
Data Analysis | | Guide for exploring data in Druid datasources | |
Data Analysis | | Help optimize Druid SQL queries for better performance | |
Cluster Management | | Comprehensive cluster health assessment guidance | None |
Cluster Management | | Overview and analysis of cluster status | None |
Ingestion Management | | Troubleshoot ingestion issues | |
Ingestion Management | | Guide for setting up new ingestion pipelines | |
Retention Management | | Manage data retention policies | |
Compaction | | Optimize segment compaction configuration | |
Compaction | | Troubleshoot compaction issues | |
Operations | | Emergency response procedures and guidance | None |
Operations | | Cluster maintenance procedures | None |

Environment Variables Configuration

The application can be configured using environment variables, which is the recommended approach for production environments. Below is a comprehensive list of supported environment variables derived from the application.yaml configuration file.

Druid Connection

DRUID_ROUTER_URL: The URL of the Druid router.

DRUID_AUTH_USERNAME: The username for Druid authentication.

DRUID_AUTH_PASSWORD: The password for Druid authentication.

DRUID_SSL_ENABLED: Enables or disables SSL for Druid connections (true/false).

DRUID_SSL_SKIP_VERIFICATION: Skips SSL certificate verification (true/false).

MCP Server Configuration

DRUID_MCP_SECURITY_OAUTH2_ENABLED: Enables or disables OAuth2 security for client authentication (true/false).

DRUID_MCP_READONLY_ENABLED: Enables or disables read-only mode (true/false).

DRUID_EXTENSION_DRUID_BASIC_SECURITY_ENABLED: Enables or disables the basic security feature (true/false). When disabled, basic security tools are not registered.

SPRING_AI_MCP_SERVER_NAME: The name of the MCP server.

SPRING_AI_MCP_SERVER_PROTOCOL: The protocol used by the MCP server (e.g., streamable).

General Server Configuration

SERVER_PORT: The port the server listens on.

SERVER_SERVLET_SESSION_COOKIE_NAME: The name of the session cookie.

SPRING_APPLICATION_NAME: The name of the application.

SPRING_CONFIG_IMPORT: Imports additional configuration files.

SPRING_MAIN_BANNER_MODE: The mode for the startup banner (e.g., off).

Logging

LOGGING_FILE_NAME: The name of the log file.

LOGGING_LEVEL_ORG_SPRINGFRAMEWORK_SECURITY: The log level for Spring Security (e.g., DEBUG).

SSL-Encrypted Cluster with Authentication

This section provides comprehensive guidance on connecting to SSL-encrypted Druid clusters with username and password authentication.

Prerequisites

SSL-enabled Druid cluster with HTTPS endpoints

Valid username and password credentials for Druid authentication

SSL certificates properly configured (or ability to skip verification for testing)

Configuration Methods

Method 1: Environment Variables (Recommended for Production)

Set the following environment variables before starting the MCP server:

Method 2: Runtime System Properties

Pass configuration as JVM system properties:

SSL Configuration Options

Production SSL Setup

For production environments with valid SSL certificates:

The server will use the system's default truststore to validate SSL certificates.
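As a rough sketch of how the connection variables listed under Druid Connection fit together for an SSL-encrypted cluster, the helper below assembles them into one environment mapping. The function name `druid_ssl_env`, the host `druid.example.com`, and the https check are illustrative assumptions; only the variable names come from this document.

```python
from urllib.parse import urlparse


def druid_ssl_env(router_url: str, username: str, password: str,
                  skip_verification: bool = False) -> dict[str, str]:
    """Assemble the Druid connection variables for an SSL-encrypted cluster."""
    if urlparse(router_url).scheme != "https":
        # Assumption: an SSL-enabled cluster is always reached over https://.
        raise ValueError("an SSL-encrypted cluster needs an https:// router URL")
    return {
        "DRUID_ROUTER_URL": router_url,
        "DRUID_AUTH_USERNAME": username,
        "DRUID_AUTH_PASSWORD": password,
        "DRUID_SSL_ENABLED": "true",
        # Keep verification on in production; skipping it is for testing only.
        "DRUID_SSL_SKIP_VERIFICATION": "true" if skip_verification else "false",
    }


# Hypothetical host and credentials, for illustration only.
env = druid_ssl_env("https://druid.example.com:8888", "admin", "password")
for name, value in env.items():
    print(f"{name}={value}")
```

Such a mapping could be merged into the process environment before the server is launched; the launch command itself is left out because it depends on how you installed the server.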

Authentication Methods

The MCP server supports HTTP Basic Authentication with username and password:

Username: Set via DRUID_AUTH_USERNAME or druid.auth.username

Password: Set via DRUID_AUTH_PASSWORD or druid.auth.password

The credentials are automatically encoded using Base64 and sent with each request using the Authorization: Basic header.
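The encoding step can be illustrated with a short sketch of standard HTTP Basic authentication (RFC 7617). This is not the server's own code; the helper name `basic_auth_header` is an assumption, and the sample credentials are the development defaults (admin/password) mentioned for the local Druid installation.

```python
import base64


def basic_auth_header(username: str, password: str) -> str:
    """Build the value of an HTTP Basic Authorization header (RFC 7617)."""
    # Join user and password with a colon, then Base64-encode the bytes.
    raw = f"{username}:{password}".encode("utf-8")
    return "Basic " + base64.b64encode(raw).decode("ascii")


print(basic_auth_header("admin", "password"))
# prints: Basic YWRtaW46cGFzc3dvcmQ=
```

Base64 is an encoding, not encryption, which is why these credentials should only travel over an SSL-encrypted connection.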

MCP Client Configuration with SSL

Update your mcp-servers-config.json to include environment variables:

MCP Prompt Customization

The server provides extensive prompt customization capabilities through the prompts.properties file located in src/main/resources/.

Prompt Configuration Structure

The prompts.properties file contains:

Global Settings: Enable/disable prompts and set watermarks

Feature Toggles: Control which prompts are available

Custom Variables: Organization-specific information

Template Definitions: Full prompt templates for each feature

Overriding Prompts

You can override any prompt template using Java system properties with the -D flag:

Method 1: System Properties (Runtime Override)

Method 2: Custom Properties File

Create a custom properties file (e.g., custom-prompts.properties):

Load it at runtime:

Available Prompt Variables

All prompt templates support these variables:

Variable | Description | Example |
| Current environment name | |
| Organization name | |
| Contact information | |
| Generated watermark | |
| Datasource name (context-specific) | |
| SQL query (context-specific) | |

Prompt Template Examples

Custom Data Exploration Prompt

Custom Query Optimization Prompt

Disabling Specific Prompts

You can disable individual prompts by setting their enabled flag to false:

Or disable all prompts globally:

MCP Integration

This server uses Spring AI's MCP Server framework and supports the STDIO, Streamable HTTP, and legacy SSE transports. Tools, resources, and prompts are automatically registered and exposed through the MCP protocol.

Transport Modes

The Druid MCP Server supports multiple transport modes compliant with the MCP 2025-06-18 specification:

Streamable HTTP Transport (Recommended)

The new Streamable HTTP transport provides enhanced performance and scalability with support for multiple concurrent clients:

Features:

Single Endpoint: One HTTP endpoint handles both POST and GET requests

Multiple Clients: Support for concurrent client connections

Optional SSE Streaming: Server-Sent Events for real-time updates

Enhanced Security: Origin header validation and authentication

Backwards Compatibility: Automatic fallback for older MCP clients

Keep-alive: Configurable connection health monitoring

Security

The Streamable HTTP and SSE modes are secured with OAuth by default. Your MCP client must obtain and send a valid bearer token when connecting.

For enterprise SSO integration (OpenID Connect, Azure AD, Keycloak, etc.), please send an inquiry to consulting@iunera.com and see Contact & Support.

STDIO Transport (Command-line Integration)

Perfect for LLM clients and desktop applications:

Legacy SSE Transport (Deprecated)

Still supported for backwards compatibility. It is no longer the default and may be removed in a future version.

Note: The SSE endpoint is secured with OAuth by default. Clients must include a valid bearer token when connecting. For SSO integration support, see Contact & Support.

Read-only Mode

Read-only mode prevents any operation that could mutate your Druid cluster while still allowing safe read operations and SQL queries. When enabled:

All HTTP GET requests are allowed

HTTP POST is allowed only to the exact path /druid/v2/sql (for SELECT and other read-only SQL)

Any other HTTP method (PUT, PATCH, DELETE) is blocked

Any other POST endpoint (e.g. ingestion/task endpoints) is blocked

MCP write tools are not registered, so they will not appear in your MCP client’s tool list
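The HTTP-level rules above can be sketched as a single predicate. This is an illustration of the stated rules, not the server's actual filter; the function name `is_allowed_in_read_only` is hypothetical.

```python
SQL_PATH = "/druid/v2/sql"


def is_allowed_in_read_only(method: str, path: str) -> bool:
    """Apply the read-only rules listed above to one outgoing Druid request."""
    method = method.upper()
    if method == "GET":
        return True               # every GET request is allowed
    if method == "POST":
        return path == SQL_PATH   # POST is allowed only on the exact SQL path
    return False                  # PUT, PATCH, DELETE and others are blocked


print(is_allowed_in_read_only("GET", "/status/health"))
print(is_allowed_in_read_only("POST", "/druid/v2/sql"))
print(is_allowed_in_read_only("POST", "/druid/v2/sql/task"))
print(is_allowed_in_read_only("DELETE", "/druid/coordinator/v1/datasources/x"))
```

Matching the path exactly rather than by prefix keeps task-based SQL endpoints such as /druid/v2/sql/task blocked.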

Enable Read-only Mode

You can enable it using any of the following methods:

application.properties

Environment variable

JVM system property

Docker

What changes in read-only mode?

Tools that would modify the cluster are disabled and won’t be listed by the MCP client. Examples include:

Segment state changes (enableSegment, disableSegment)

Datasource deletion (killDatasource)

Retention rule edits (editRetentionRulesForDatasource)

Compaction config edits (editCompactionConfigForDatasource, deleteCompactionConfigForDatasource)

Lookup changes (createOrUpdateLookup, deleteLookup)

Supervisor control (suspendSupervisor, startSupervisor, terminateSupervisor)

Task control (killTask)

Multi-stage SQL task operations (queryDruidMultiStage, queryDruidMultiStageWithContext, getMultiStageQueryTaskStatus, cancelMultiStageQueryTask)

Ingestion spec submission and templates (createIngestionSpec, createBatchIngestionTemplate)

Basic security changing tools (e.g., createAuthenticationUser, deleteAuthenticationUser, setUserPassword, createAuthorizationUser, deleteAuthorizationUser, createRole, deleteRole, setRolePermissions, assignRoleToUser, unassignRoleFromUser)

Read-only-safe tools remain available, including SQL queries (queryDruidSql), metadata and status lookups, health diagnostics, task and segment inspection, etc.
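For orientation, this is roughly what a read-only SQL call looks like at the HTTP level, using Druid's SQL API on the router. The sketch only builds the request and does not send it; the helper name `build_sql_request`, the localhost URL, and the sample query are assumptions, and the MCP tool may construct its requests differently.

```python
import json
import urllib.request


def build_sql_request(router_url: str, sql: str) -> urllib.request.Request:
    """Build (but do not send) a POST to Druid's SQL endpoint."""
    # Druid's SQL API takes a JSON body with the statement under "query".
    body = json.dumps({"query": sql, "resultFormat": "object"}).encode("utf-8")
    return urllib.request.Request(
        url=router_url.rstrip("/") + "/druid/v2/sql",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Assumes a router on the default port 8888 named in the prerequisites.
req = build_sql_request("http://localhost:8888", "SELECT 1")
print(req.get_method(), req.full_url)
# prints: POST http://localhost:8888/druid/v2/sql
```

Passing the request to `urllib.request.urlopen` would execute it against a running cluster; add an Authorization header first if the cluster requires authentication.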

Metrics Collection

To enhance the product and understand usage patterns, this server collects anonymous usage metrics. This data helps prioritize new features and improvements. You can opt out of anonymous metrics collection by setting druid.mcp.metrics.enabled to false.
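If you configure the server through environment variables, Spring Boot's documented relaxed-binding rule maps a property name to a variable name: dots become underscores, dashes are dropped, and letters are upper-cased. The helper `to_env_name` below is a hypothetical illustration of that rule. Whether this server picks up the resulting variable is an assumption to verify against its application.yaml.

```python
def to_env_name(property_name: str) -> str:
    """Map a Spring property name to its relaxed-binding environment variable."""
    # Dots become underscores, dashes are removed, everything is upper-cased.
    return property_name.replace(".", "_").replace("-", "").upper()


print(to_env_name("druid.mcp.metrics.enabled"))
# prints: DRUID_MCP_METRICS_ENABLED
```

The same rule turns druid.auth.username into DRUID_AUTH_USERNAME, which matches the pairing shown in the authentication section.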

🐳 Development Druid Installation

For local development, testing, and learning, a complete Docker Compose setup for running a full Apache Druid cluster is available at iunera/druid-local-cluster-installer.

This setup is the recommended way to get a Druid cluster running for use with this MCP server.

Key Features:

Complete Druid Cluster: Includes all core Druid services (Coordinator, Broker, Historical, MiddleManager, Router).

One-Command Install: Automated scripts for macOS, Linux, and Windows.

Cross-Platform: Runs anywhere Docker is available.

Pre-configured: Sensible defaults for local development.

Basic Security Enabled: Pre-configured admin user (admin/password).

Ready for Data Philter: Designed to work out-of-the-box with iunera/data-philter.

Related Projects

This Druid MCP Server is part of a comprehensive ecosystem of Apache Druid tools and extensions developed by iunera. These complementary projects enhance different aspects of Druid cluster management and data ingestion:

🔧 Druid Cluster Configuration

Advanced configuration management and deployment tools for Apache Druid clusters. This project provides:

Automated Cluster Setup: Streamlined configuration templates for different deployment scenarios

Configuration Management: Best practices and templates for production Druid clusters

Deployment Automation: Tools and scripts for consistent cluster deployments

Environment-Specific Configs: Optimized configurations for development, staging, and production environments

Integration with Druid MCP Server: The cluster configurations provided by this project work seamlessly with the monitoring and management capabilities of the Druid MCP Server, enabling comprehensive cluster lifecycle management.

📊 Code Ingestion Druid Extension

A specialized Apache Druid extension for ingesting and analyzing code-related data and metrics. This extension enables:

Code Metrics Ingestion: Specialized parsers for code analysis data and software metrics

Developer Analytics: Tools for analyzing code quality, complexity, and development patterns

CI/CD Integration: Seamless integration with continuous integration and deployment pipelines

Custom Data Formats: Support for various code analysis tools and formats

Integration with Druid MCP Server: This extension expands the ingestion capabilities that can be managed through the MCP server's ingestion management tools, providing specialized support for code analytics use cases.

Why Use These Together?

Complete Ecosystem: From cluster setup to specialized data ingestion and management

Consistent Architecture: All projects follow similar design principles and integration patterns

Enhanced Capabilities: Each project extends different aspects of the Druid ecosystem

Production Ready: Battle-tested configurations and extensions for enterprise deployments

Roadmap

Druid Auto Compaction: Intelligent automatic compaction configuration

MCP Auto Completion: Enhanced autocomplete functionality with sampling using McpComplete

MCP Notifications: Real-time notifications for MCP operations

Proper Observability: Comprehensive metrics and tracing

Enhanced Monitoring: Advanced cluster monitoring and alerting capabilities

Advanced Analytics: Machine learning-powered insights and recommendations

Kubernetes Support: Proper deployment on Kubernetes

About iunera

This Druid MCP Server is developed and maintained by iunera, a leading provider of advanced AI and data analytics solutions.

iunera specializes in:

AI-Powered Analytics: Cutting-edge artificial intelligence solutions for data analysis

Enterprise Data Platforms: Scalable data infrastructure and analytics platforms (Druid, Flink, Kubernetes, Kafka, Spring)

Model Context Protocol (MCP) Solutions: Advanced MCP server implementations for various data systems

Custom AI Development: Tailored AI solutions for enterprise needs

As Apache Druid veterans, iunera has deployed and maintained a large number of Druid-based solutions in production, enterprise-grade scenarios.

Need Expert Apache Druid Consulting?

Maximize your return on data with professional Druid implementation and optimization services. From architecture design to performance tuning and AI integration, our experts help you navigate Druid's complexity and unlock its full potential.

Need Enterprise MCP Server Development Consulting?

ENTERPRISE AI INTEGRATION & CUSTOM MCP (MODEL CONTEXT PROTOCOL) SERVER DEVELOPMENT

Iunera specializes in developing production-grade AI agents and enterprise-grade LLM solutions, helping businesses move beyond generic AI chatbots. They build secure, scalable, and future-ready AI infrastructure, underpinned by the Model Context Protocol (MCP), to connect proprietary data, legacy systems, and external APIs to advanced AI models.

Get Enterprise MCP Server Development Consulting →

For more information about our services and solutions, visit www.iunera.com.

Contact & Support

Need help? Let

Website: https://www.iunera.com

Professional Services: Contact us through email for Apache Druid enterprise consulting, support and custom development

Open Source: This project is open source and community contributions are welcome

© 2024