AI Charts

Enables the generation of Markdown-formatted summaries and structural descriptions of charts for inclusion in technical documentation.

Allows for the programmatic export of flowcharts, entity-relationship diagrams, and swimlane diagrams into Mermaid syntax for use in external diagramming and documentation tools.
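As a rough illustration of what a Mermaid export involves, the sketch below turns a list of nodes and edges into Mermaid `flowchart` syntax. The `ChartNode` and `ChartEdge` types and the `toMermaid` function are hypothetical and are not taken from the AI Charts codebase; the real exporter may model its data quite differently.

```typescript
// Hypothetical data shapes for illustration; AI Charts' real schema may differ.
interface ChartNode {
  id: string;
  label: string;
  kind: "process" | "decision";
}

interface ChartEdge {
  from: string;
  to: string;
  label?: string;
}

// Wrap text in double quotes, replacing embedded quotes with Mermaid's entity code.
function quote(text: string): string {
  return `"${text.replace(/"/g, "#quot;")}"`;
}

// Render nodes and edges as a top-down Mermaid flowchart.
function toMermaid(nodes: ChartNode[], edges: ChartEdge[]): string {
  const lines: string[] = ["flowchart TD"];
  for (const node of nodes) {
    // Decisions use a rhombus ({...}); everything else uses a rectangle ([...]).
    const shape =
      node.kind === "decision" ? `{${quote(node.label)}}` : `[${quote(node.label)}]`;
    lines.push(`  ${node.id}${shape}`);
  }
  for (const edge of edges) {
    const arrow = edge.label ? `-->|${quote(edge.label)}|` : "-->";
    lines.push(`  ${edge.from} ${arrow} ${edge.to}`);
  }
  return lines.join("\n");
}

const mermaid = toMermaid(
  [
    { id: "login", label: "Enter credentials", kind: "process" },
    { id: "check", label: "Credentials valid?", kind: "decision" },
    { id: "home", label: "Show dashboard", kind: "process" },
  ],
  [
    { from: "login", to: "check" },
    { from: "check", to: "home", label: "Yes" },
    { from: "check", to: "login", label: "No" },
  ],
);
console.log(mermaid);
```

The resulting text can be pasted into any tool that renders Mermaid. Node ids are kept separate from labels so that labels can contain spaces and punctuation.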

Click on "Install Server".

Wait a few minutes for the server to deploy. Once ready, it will show a "Started" state.

In the chat, type @ followed by the MCP server name and your instructions, e.g., "@AI Charts create a flowchart for a user login process with error handling".

That's it! The server will respond to your query, and you can continue using it as needed.


AI Charts

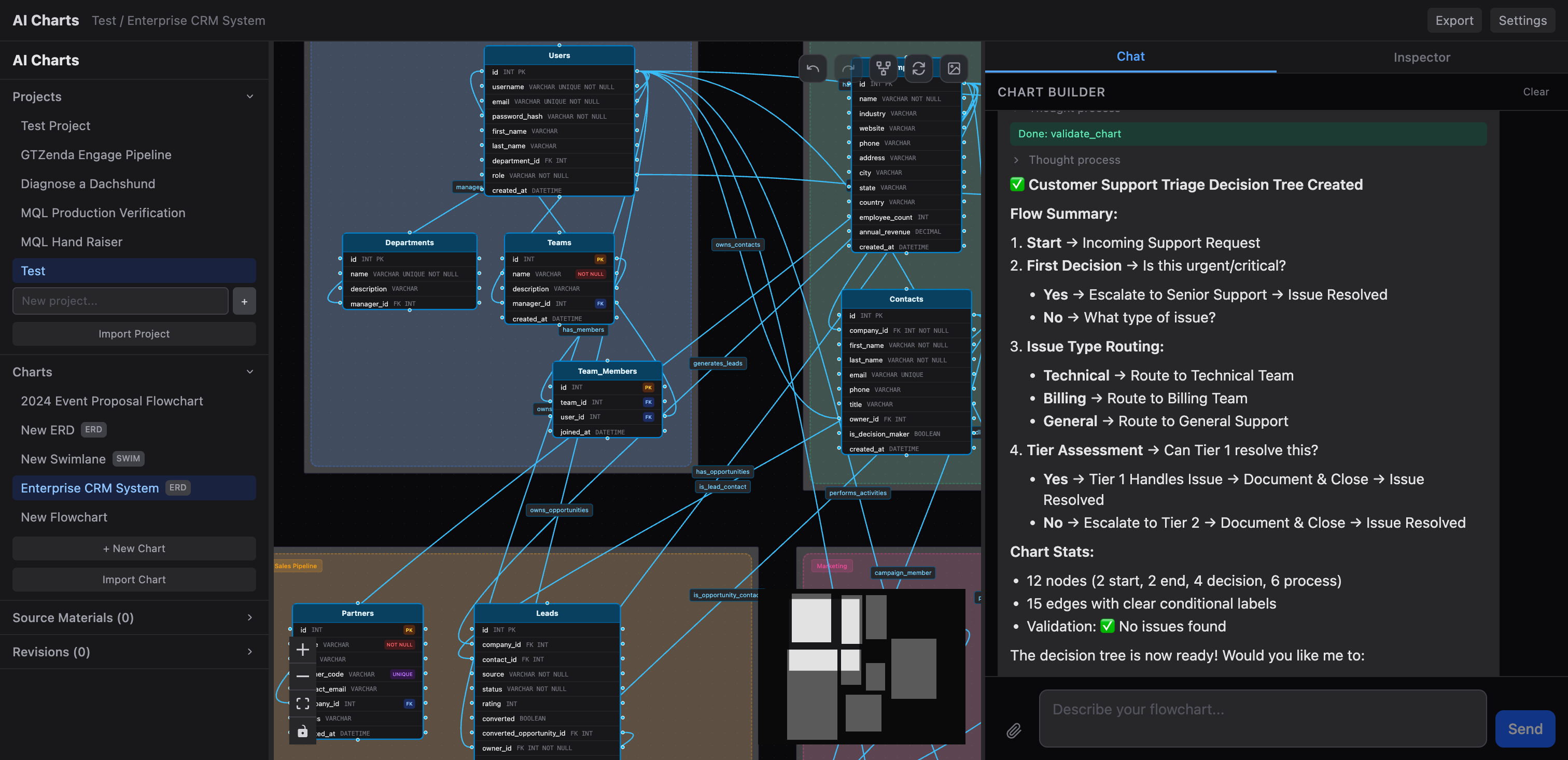

AI-powered flowchart, ERD, and swimlane diagram builder.

AI Charts combines a built-in AI assistant for quick chart creation with an MCP server that lets external AI tools like Claude Desktop and Cursor create and manage charts programmatically. It works with any OpenAI-compatible LLM provider — Ollama, OpenAI, or your own endpoint. No vendor lock-in.

Features

Three chart types — flowcharts, entity-relationship diagrams, and swimlane diagrams

Built-in AI assistant — setup wizard for new projects and a chart builder mode for iterating on diagrams through conversation

MCP server — 18+ tools for external AI integration; connect Claude Desktop, Cursor, or any MCP client to create and manage charts programmatically

Any LLM provider — works with Ollama, OpenAI, or any OpenAI-compatible API

Import/export — portable ZIP project archives, Mermaid syntax, Markdown summaries, and PDF export

Auto-layout & validation — ELK-based auto-layout, cycle detection, reachability analysis, and structural validation

Revision history — full undo/redo with event tracking

Dark-themed UI — built with React Flow for interactive diagram editing
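To make the validation feature more concrete, here is a minimal sketch of cycle detection and reachability analysis on a directed graph of chart nodes and edges. It is an assumption about how such checks could work, not the project's actual implementation; the `Edge` type and the function names are hypothetical.

```typescript
// Hypothetical edge shape for illustration; not the project's real schema.
interface Edge {
  from: string;
  to: string;
}

// Build an adjacency list mapping each node id to its outgoing neighbours.
function buildAdjacency(nodeIds: string[], edges: Edge[]): Map<string, string[]> {
  const adjacency = new Map<string, string[]>();
  for (const id of nodeIds) adjacency.set(id, []);
  for (const { from, to } of edges) adjacency.get(from)?.push(to);
  return adjacency;
}

// Reachability: collect every node reachable from `start` via breadth-first search.
function reachableFrom(start: string, nodeIds: string[], edges: Edge[]): Set<string> {
  const adjacency = buildAdjacency(nodeIds, edges);
  const seen = new Set<string>([start]);
  const queue: string[] = [start];
  while (queue.length > 0) {
    const current = queue.shift() as string;
    for (const next of adjacency.get(current) ?? []) {
      if (!seen.has(next)) {
        seen.add(next);
        queue.push(next);
      }
    }
  }
  return seen;
}

// Cycle detection: depth-first search where each node is unvisited, "visiting", or "done".
// Meeting a node that is still "visiting" means we followed a back edge.
function hasCycle(nodeIds: string[], edges: Edge[]): boolean {
  const adjacency = buildAdjacency(nodeIds, edges);
  const state = new Map<string, "visiting" | "done">();

  const visit = (id: string): boolean => {
    if (state.get(id) === "visiting") return true;
    if (state.get(id) === "done") return false;
    state.set(id, "visiting");
    for (const next of adjacency.get(id) ?? []) {
      if (visit(next)) return true;
    }
    state.set(id, "done");
    return false;
  };

  return nodeIds.some((id) => visit(id));
}

const ids = ["login", "check", "home", "orphan"];
const flow: Edge[] = [
  { from: "login", to: "check" },
  { from: "check", to: "home" },
  { from: "check", to: "login" },
];
const reached = reachableFrom("login", ids, flow);
const unreachable = ids.filter((id) => !reached.has(id));
console.log(hasCycle(ids, flow), unreachable);
```

In this example the retry edge from `check` back to `login` forms a cycle, and `orphan` is never reached from `login`. In a flowchart a cycle is not necessarily an error (retry loops are common), so a validator would likely report it rather than reject the chart.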

Quick Start

Prerequisites: Bun and an LLM provider (e.g. Ollama)

git clone https://github.com/tjameswilliams/ai-charts.git

cd ai-charts

bun install

bun run db:push

bun run dev

The app will be available at http://localhost:5174.

LLM Configuration

Configure your LLM provider through the Settings UI in the app. The default configuration points to a local Ollama instance:

| Setting | Default |
| --- | --- |
| API Base URL | |
| API Key | |
| Model | |

To use OpenAI, set the base URL to https://api.openai.com/v1, add your API key, and choose a model like gpt-4o. Any OpenAI-compatible endpoint works the same way.
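The three settings map onto a standard OpenAI-style chat completions request. The sketch below shows how a client might assemble one from a base URL, API key, and model. `LlmSettings` and `buildChatRequest` are hypothetical names, not the app's internal API, and the `/chat/completions` path follows the OpenAI convention that compatible providers also implement.

```typescript
// Hypothetical settings shape mirroring the three fields in the Settings UI.
interface LlmSettings {
  baseUrl: string;
  apiKey: string;
  model: string;
}

interface ChatMessage {
  role: "system" | "user" | "assistant";
  content: string;
}

// Assemble the URL and fetch options for a chat completions call.
function buildChatRequest(settings: LlmSettings, messages: ChatMessage[]) {
  // Trim trailing slashes so the path joins cleanly.
  const url = `${settings.baseUrl.replace(/\/+$/, "")}/chat/completions`;
  const headers: Record<string, string> = { "Content-Type": "application/json" };
  // Local providers often need no key, so only send the header when one is set.
  if (settings.apiKey) headers["Authorization"] = `Bearer ${settings.apiKey}`;
  return {
    url,
    init: {
      method: "POST",
      headers,
      body: JSON.stringify({ model: settings.model, messages }),
    },
  };
}

const request = buildChatRequest(
  { baseUrl: "https://api.openai.com/v1", apiKey: "sk-placeholder", model: "gpt-4o" },
  [{ role: "user", content: "Draw a flowchart for a user login process" }],
);
// Sending is then a single call: await fetch(request.url, request.init)
console.log(request.init.method, request.url);
```

Because only the base URL, key, and model change between providers, switching from a local model to a hosted one requires no code changes in a client built this way.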

MCP Server

AI Charts exposes an MCP server so external AI tools can create and manage charts programmatically.

Run standalone:

bun run mcp

Connect from Claude Desktop by adding this to your claude_desktop_config.json:

{
  "mcpServers": {
    "ai-charts": {
      "command": "bun",
      "args": ["run", "mcp"],
      "cwd": "/path/to/ai-charts"
    }
  }
}

Available tools include: list_projects, create_project, list_charts, create_chart, build_chart, add_node, add_edge, delete_node, delete_edge, resize_nodes, get_nodes, get_chart_status, validate_chart, export_mermaid, export_markdown, and more.
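Under the hood, an MCP client invokes tools like these with JSON-RPC 2.0 `tools/call` requests. The sketch below builds such a message for `create_chart`. The argument names (`projectId`, `title`, `type`) are assumptions for illustration only: the tool's real input schema is not documented here and should be read from the server's `tools/list` response.

```typescript
// Shape of a JSON-RPC 2.0 request as used by the Model Context Protocol.
interface ToolCallRequest {
  jsonrpc: "2.0";
  id: number;
  method: "tools/call";
  params: { name: string; arguments: Record<string, unknown> };
}

let nextId = 1;

// Wrap a tool name and its arguments in a tools/call request with a fresh id.
function toolCall(name: string, args: Record<string, unknown>): ToolCallRequest {
  return {
    jsonrpc: "2.0",
    id: nextId++,
    method: "tools/call",
    params: { name, arguments: args },
  };
}

// Argument names below are hypothetical; check the tool's input schema first.
const message = toolCall("create_chart", {
  projectId: "demo-project",
  title: "User login",
  type: "flowchart",
});

// Over a stdio transport, each message is written as a single line of JSON.
console.log(JSON.stringify(message));
```

In practice an MCP client such as Claude Desktop or Cursor builds and sends these messages for you; the sketch is only meant to show what travels over the wire.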

Project Structure

client/            React + Vite frontend
  src/
    components/    UI components
    store/         Zustand state management
    api/           API client
server/            Bun + Hono backend
  routes/          API endpoints
  db/              Drizzle ORM schema & migrations
  lib/
    llm.ts         LLM integration & tool definitions
    mcp/           MCP server & client manager
    export/        Export utilities (Mermaid, Markdown, PDF)
    validation.ts  Chart validation

Tech Stack

Runtime: Bun

Backend: Hono, SQLite via Drizzle ORM

Frontend: React 19, React Flow, Zustand, Tailwind CSS 4

AI: OpenAI-compatible chat completions, Model Context Protocol

Layout: ELK via elkjs

License

