open-code-review

Provides automated pull request reviews and security scanning for GitHub repositories, including SARIF output for GitHub Code Scanning.

Offers a dedicated GitHub Action to implement a CI/CD quality gate for detecting defects in AI-generated code.

Acts as a quality gate for code generated by GitHub Copilot to detect hallucinations and stale APIs that traditional linters miss.

Integrates with GitLab CI pipelines to provide automated code quality reports and security analysis.

Features specialized detectors for JavaScript to identify hallucinated imports, stale APIs, and over-engineering.

Detects Kotlin-specific anti-patterns such as null-safety abuse and println leaks during automated code reviews.

Verifies JavaScript and TypeScript package imports against the npm registry to detect hallucinated dependencies.

Enables 100% local, privacy-focused code analysis and deep scanning using local LLM models via Ollama.

Utilizes OpenAI-compatible APIs for deep LLM analysis, cross-file coherence checks, and logic bug detection.

Cross-references Python imports with the PyPI database to identify hallucinated or non-existent package declarations.

Includes dedicated scanners for Python to detect bare excepts, eval usage, and hallucinated imports.

Provides deep structural and semantic analysis for TypeScript codebases, including hallucinated npm import detection.

Performs security scanning to detect potential vulnerabilities and anti-patterns related to Unicode usage in source code.

Supports YAML-based configuration for defining quality gate thresholds, SLA levels, and AI provider settings.

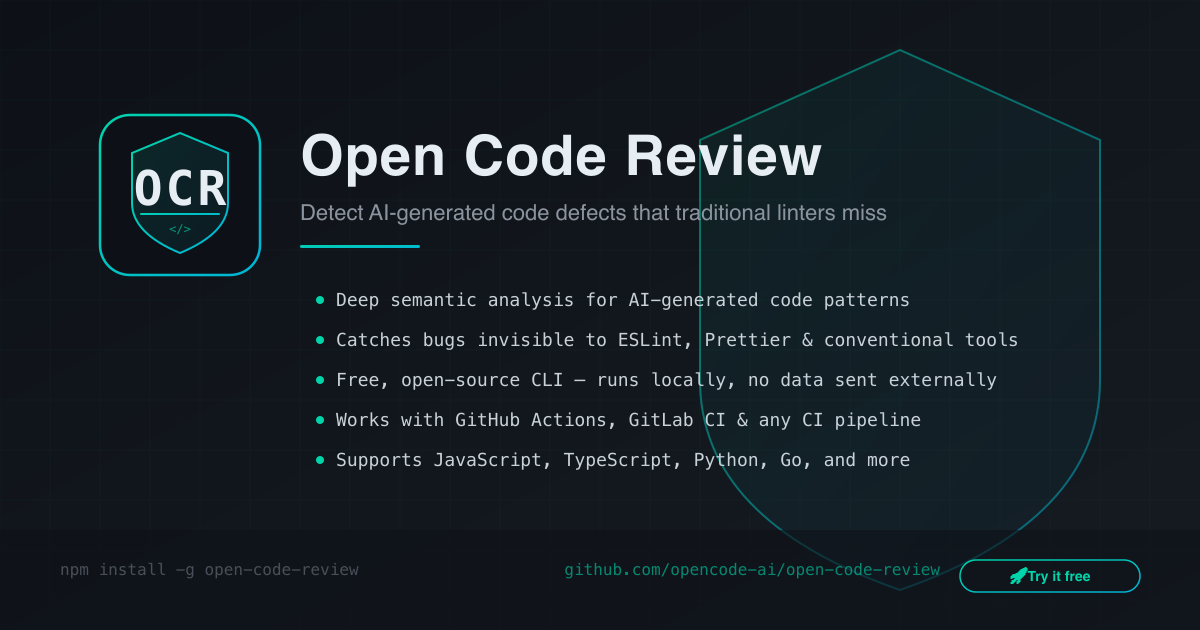

Open Code Review

The first open-source CI/CD quality gate built specifically for AI-generated code. Detects hallucinated imports, stale APIs, over-engineering, and security anti-patterns — powered by local LLMs and any OpenAI-compatible provider. Free. Self-hostable. 6 languages.

Works With

Any AI tool that generates code — if it writes it, OCR reviews it.

What AI Linters Miss

AI coding assistants (Copilot, Cursor, Claude) generate code with defects that traditional tools mostly miss:

| Defect | Example | ESLint / SonarQube |
| --- | --- | --- |
| Hallucinated imports | | ❌ Miss |
| Stale APIs | Using deprecated APIs from training data | ❌ Miss |
| Context window artifacts | Logic contradictions across files | ❌ Miss |
| Over-engineered patterns | Unnecessary abstractions, dead code | ❌ Miss |
| Security anti-patterns | Hardcoded example secrets, | ❌ Partial |

Open Code Review detects all of them — across 6 languages, for free.

Demo

📄 View full interactive HTML report

Quick Preview

$ ocr scan src/ --sla L3

╔══════════════════════════════════════════════════════════════╗

║ Open Code Review — Deep Scan Report ║

╚══════════════════════════════════════════════════════════════╝

Project: packages/core/src

SLA: L3 Deep — Structural + Embedding + LLM Analysis

112 issues found in 110 files

Overall Score: 67/100 D

Threshold: 70 | Status: FAILED

Files Scanned: 110 | Languages: typescript | Duration: 12.3s

Deep Scan (L3): How It Works

L3 combines three analysis layers for maximum coverage:

Layer 1: Structural Detection
├── Hallucinated imports (npm/PyPI)
├── Stale API detection
├── Security patterns
├── Over-engineering metrics
└── A+ → F quality scoring

Layer 2: Semantic Analysis
├── Embedding similarity recall
├── Risk scoring
├── Context window artifacts
└── Enhanced severity ranking

Layer 3: LLM Deep Scan
├── Cross-file coherence check
├── Logic bug detection
├── Confidence scoring
└── AI-powered fix suggestions

Powered by local LLMs or any OpenAI-compatible API. Run Ollama for 100% local analysis, or connect to any remote LLM provider; the interface is the same.
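One way to read "the interface is the same" is that a local and a remote provider can receive an identical OpenAI-style chat completions request, differing only in base URL and credentials. The sketch below builds such a request without sending it. `build_chat_request` is a hypothetical helper, and this document does not state how Open Code Review actually talks to its providers; the local URL assumes Ollama's OpenAI-compatible `/v1` endpoint.

```python
import json
from typing import Optional


def build_chat_request(api_base: str, model: str, prompt: str,
                       api_key: Optional[str] = None):
    """Build (url, headers, body) for an OpenAI-style chat completion; nothing is sent."""
    url = api_base.rstrip("/") + "/chat/completions"
    headers = {"Content-Type": "application/json"}
    if api_key:
        # Remote providers typically expect a bearer token; a local server may not.
        headers["Authorization"] = f"Bearer {api_key}"
    body = json.dumps({
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    })
    return url, headers, body


# Same call shape for both targets; only the base URL and key differ.
local = build_chat_request("http://localhost:11434/v1", "qwen3-coder", "Review this diff")
remote = build_chat_request("https://your-llm-endpoint/v1", "your-model",
                            "Review this diff", api_key="YOUR_KEY")
print(local[0])
print(remote[0])
```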

# Local analysis (Ollama)

ocr scan src/ --sla L3 --provider ollama --model qwen3-coder

# Any OpenAI-compatible provider

ocr scan src/ --sla L3 --provider openai-compatible \
  --api-base https://your-llm-endpoint/v1 --model your-model --api-key YOUR_KEY

AI Auto-Fix: ocr heal

Let AI automatically fix the issues it finds. Review changes before applying.

# Preview fixes without changing files

ocr heal src/ --dry-run

# Apply fixes + generate IDE rules

ocr heal src/ --provider ollama --model qwen3-coder --setup-ide

# Only generate IDE rules (Cursor, Copilot, Augment)

ocr setup src/

Multi-Language Detection

Language-specific detectors for 6 languages, plus package registry checks for hallucinated dependencies (npm, PyPI, Maven, Go modules):

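| Language | Specific Detectors |
| --- | --- |
| TypeScript / JavaScript | Hallucinated imports (npm), stale APIs, over-engineering |
| Python | Bare excepts, eval usage, hallucinated imports (PyPI) |
| Java | |
| Go | Unhandled errors, deprecated |
| Kotlin | Null-safety abuse, println leaks |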
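As an illustration of what a language-specific detector can look like, the sketch below flags two of the Python patterns this document mentions, bare `except:` clauses and `eval` calls, by walking the syntax tree. The function name `scan_python` and the rule labels are hypothetical; this is not the project's detector code.

```python
import ast


def scan_python(source: str) -> list[tuple[int, str]]:
    """Return (line, rule) pairs for bare `except:` clauses and `eval(...)` calls."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.ExceptHandler) and node.type is None:
            # An except clause with no exception type catches everything.
            findings.append((node.lineno, "bare-except"))
        elif (isinstance(node, ast.Call)
              and isinstance(node.func, ast.Name)
              and node.func.id == "eval"):
            findings.append((node.lineno, "eval-call"))
    return sorted(findings)


sample = """\
try:
    value = eval(user_input)
except:
    value = None
"""
# The sample is only parsed, never executed, so `user_input` need not exist.
print(scan_python(sample))  # → [(2, 'eval-call'), (3, 'bare-except')]
```

Working on the parsed tree rather than on raw text avoids false positives from comments or strings that merely contain the word `eval`.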

How It Compares

| | Open Code Review | Claude Code Review | CodeRabbit | GitHub Copilot |
| --- | --- | --- | --- | --- |
| Price | Free | $15–25/PR | $24/mo/seat | $10–39/mo |
| Open Source | ✅ | ❌ | ❌ | ❌ |
| Self-hosted | ✅ | ❌ | Enterprise | ❌ |
| AI Hallucination Detection | ✅ | ❌ | ❌ | ❌ |
| Stale API Detection | ✅ | ❌ | ❌ | ❌ |
| Deep LLM Analysis | ✅ | ❌ | ❌ | ❌ |
| AI Auto-Fix | ✅ | ❌ | ❌ | ❌ |
| Multi-Language | ✅ 6 langs | ❌ | JS/TS | JS/TS |
| Registry Verification | ✅ npm/PyPI/Maven | ❌ | ❌ | ❌ |
| Unicode Security Detection | ✅ | ❌ | ❌ | ❌ |
| SARIF Output | ✅ | ❌ | ❌ | ❌ |
| GitHub + GitLab | ✅ Both | GitHub only | Both | GitHub only |
| Data Privacy | ✅ 100% local | ❌ Cloud | ❌ Cloud | ❌ Cloud |

Quick Start

# Install

npm install -g @opencodereview/cli

# Fast scan — no AI needed

ocr scan src/

# Deep scan — with local LLM (Ollama)

ocr scan src/ --sla L3 --provider ollama --model qwen3-coder

# Deep scan — with any OpenAI-compatible provider

ocr scan src/ --sla L3 --provider openai-compatible \
  --api-base https://your-provider/v1 --model your-model --api-key YOUR_KEY

CI/CD Integration

GitHub Actions (30 seconds)

name: Code Review
on: [pull_request]
jobs:
  review:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - uses: raye-deng/open-code-review@v1
        with:
          sla: L1
          threshold: 60
          github-token: ${{ secrets.GITHUB_TOKEN }}

GitLab CI

code-review:
  script:
    - npx @opencodereview/cli scan src/ --sla L1 --threshold 60 --format json --output ocr-report.json
  artifacts:
    reports:
      codequality: ocr-report.json

Output Formats

ocr scan src/ --format terminal  # Pretty terminal output
ocr scan src/ --format json      # JSON for CI pipelines
ocr scan src/ --format sarif     # SARIF for GitHub Code Scanning
ocr scan src/ --format html      # Interactive HTML report

Configuration

# .ocrrc.yml
sla: L3
ai:
  embedding:
    provider: ollama
    model: nomic-embed-text
    baseUrl: http://localhost:11434
  llm:
    provider: ollama
    model: qwen3-coder
    endpoint: http://localhost:11434
    # Or use any OpenAI-compatible provider:
    # provider: openai-compatible
    # apiBase: https://your-llm-endpoint/v1
    # model: your-model

MCP Server: Use in Claude Desktop, Cursor, Windsurf

Integrate Open Code Review directly into your AI IDE via the Model Context Protocol:

npx @opencodereview/mcp-server

Claude Desktop (claude_desktop_config.json):

{
  "mcpServers": {
    "open-code-review": {
      "command": "npx",
      "args": ["-y", "@opencodereview/mcp-server"]
    }
  }
}

Cursor / Windsurf / VS Code Copilot: Add the same configuration in your MCP settings.

Available MCP Tools: ocr_scan (quality gate scan), ocr_heal (AI auto-fix), ocr_explain (issue explanation).
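For readers curious what an MCP client sends when it invokes one of these tools, the sketch below serializes a `tools/call` request in the JSON-RPC 2.0 shape defined by the Model Context Protocol. `build_tool_call` is a hypothetical helper and the `path` argument is an assumption; the real input schema for `ocr_scan` is whatever the server reports in its `tools/list` response.

```python
import json


def build_tool_call(request_id: int, tool: str, arguments: dict) -> str:
    """Serialize an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool, "arguments": arguments},
    })


# "path" is a hypothetical argument name used only for illustration.
print(build_tool_call(1, "ocr_scan", {"path": "src/"}))
```

In practice the IDE's MCP client builds this message for you; the sketch only shows what travels over the wire.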

💡 Chrome DevTools MCP Compatible: The OCR MCP Server follows the standard Model Context Protocol. Pair it with Google's Chrome DevTools MCP Server for a complete AI-native dev workflow — one inspects your running app, the other inspects your source code.

Project Structure

packages/
  core/           # Detection engine + scoring (@opencodereview/core)
  cli/            # CLI tool, the ocr command (@opencodereview/cli)
  mcp-server/     # MCP Server for AI IDEs (@opencodereview/mcp-server)
  github-action/  # GitHub Action wrapper

Who Is This For?

Teams using AI coding assistants: Copilot, Cursor, Claude Code, Codex, or any LLM-based tool that generates production code

Open-source maintainers: review AI-generated PRs for hallucinated imports, stale APIs, and security anti-patterns before merging

DevOps / Platform engineers: add a quality gate to CI/CD pipelines without sending code to cloud services

Security-conscious teams: run everything locally (Ollama) and keep your code on your machines

Solo developers: free, fast, and works with zero configuration (npx @opencodereview/cli scan src/)

License

BSL-1.1 — Free for personal and non-commercial use. Converts to Apache 2.0 on 2030-03-11. Commercial use requires a Team or Enterprise license.

Star this repo if you find it useful — it helps more than you think!
